?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.

?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.Abstract

Orthogonal matching pursuit (OMP) algorithm is a classical greedy algorithm widely used in compressed sensing. In this paper, by exploiting the Wielandt inequality and some properties of orthogonal projection matrix, we obtained a new number of iterations required for the OMP algorithm to perform exact recovery of sparse signals, which improves significantly upon the latest results as we know.

1. Introduction

Orthogonal matching pursuit (OMP) has received growing attention due to its simplicity and competitive reconstruction performance recently. Consider the following compressed linear model:

(1)

(1)

where

is a K-sparse signal (i.e.,

),

is a known measurement matrix with

and

is the observation signal. It has been demonstrated that under some appropriate conditions on Φ, OMP can reliably recover the signal x based on a set of compressive observations y by iteratively identifying the support of the sparse signal according to the maximum correlation between columns of measurement matrix and the current residual. See Table for a detailed description of the OMP algorithm (Cai & Wang, Citation2011; Chang & Wu, Citation2014; Tropp & Gilbert, Citation2007; Wang & Shim, Citation2016; Wen et al., Citation2020, Citation2017; Wu et al., Citation2013). In Table ,

is the set of nonzero positions in x.

denotes the residual after the kth iteration of OMP and

the estimated support set within kth iteration of OMP.

Table 1. Orthogonal matching pursuit.

In compressed sensing, a commonly used framework for analysing the recovery performance is the restricted isometry property (RIP) (Cai et al., Citation2010; Candes & Tao, Citation2005; Chang & Wu, Citation2014). A matrix Φ is said to satisfy the RIP of order K if there exists a constant such that

(2)

(2)

for all K-sparse signal x. In particular, the minimum of all constants δ satisfying (Equation2

(2)

(2) ) is called the K-order Restricted Isometry Constant (RIC) and denoted by

. Over the years, many RIP-based conditions have been proposed to guarantee exact recovery of any K-sparse signals via OMP in K iterations. It has been shown in Davenport and Wakin (Citation2010) that

is sufficient for OMP to recover any K-sparse signals x in K iterations. The sufficient reconstruction condition of OMP is then improved to

by Huang and Zhu (Citation2011). Mo (Citation2015) demonstrated that

is a sharp condition for exact recovery of any K-sparse signal with OMP in K iterations. Our recent work Liu et al. (Citation2017) provides some sufficient conditions for recovering restricted classes of K-sparse signals with a more relaxed bound on RIC.

Obviously, running fewer number of iterations of OMP offers many benefits and many efforts have been made to improve the condition (Cai et al., Citation2009; Chang & Wu, Citation2014; Li & Wen, Citation2019; Wen et al., Citation2017; Wu et al., Citation2013). In Livshitz (Citation2012), Livshitz showed that with proper choices of α and β (), OMP accurately reconstructs K-sparse signals in

iterations under

. It has been shown by Zhang (Citation2011) that OMP recovers any K-sparse signal in

iterations under

. Livshitz and Temlyakov (Citation2014) considered random sparse signals and showed that with high probability, these signals can be recovered within

iterations of OMP for any

. Recently, Wang and Shim (Citation2016) showed that if

(3)

(3)

and

, OMP can recover the K-sparse signals in

iterations. It is the best result as we know in the literature.

In this paper, we present a new result on how many iterations of OMP would be enough to guarantee exact recovery of sparse signals:

which improves significantly upon the results proposed in Wang and Shim (Citation2016).

We first give some notation. Let and

.

and

denote floor and ceiling function, respectively. For any two sets Λ and Γ, let

, and

is the cardinality of Λ. For

and

,

denotes the submatrix of Φ that contains only the columns indexed by Λ and

denotes the subvector of x that contains only the entries indexed by Λ, and

represents the span of columns in

. Let

stand for an orthogonal projection matrix onto

, where

is the conjugate transpose of the matrix Φ, and

is Moore–Penrose pseudo inverse of

.

is an orthogonal projection matrix onto the orthogonal complement of

, where

denotes the identity matrix. In particular, if

, then

is a 0-by-1 empty vector,

is an m-by-0 empty matrix,

is an m-by-1 zero matrix and

. For further details on empty matrices, see, e.g., Bernstein (Citation2005).

2. Main results

For notational simplicity, we denote .

Theorem 2.1

For any , let

and

. If the measurement matrix Φ in (Equation1

(1)

(1) ) satisfies the RIP of order

and

then

.

Remark 2.1

Performance of Theorem 2.1

From Theorem 2.1, if

(4)

(4)

and

, then OMP perfectly recovers the signal x from the measurements

in

iteration. In the following, we compare the lower bound in (Equation4

(4)

(4) ) with the result of Wang and Shim (Citation2016), which has been showed in (Equation3

(3)

(3) ). We first establish an upper bound for the ratio of (Equation4

(4)

(4) ) to (Equation3

(3)

(3) ) by using monotonicity property of the RIC (

) as

It is easy to check that

for

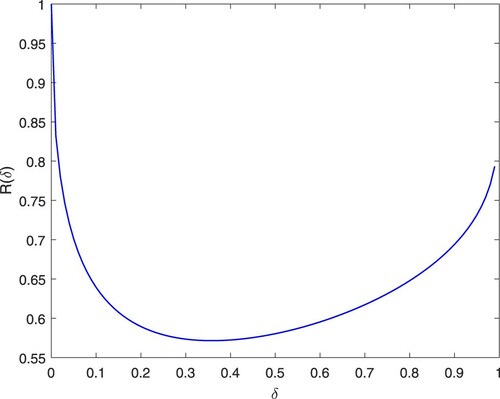

, which means the lower bound of c in this paper is uniformly smaller than the one proposed in Wang and Shim (Citation2016). See Figure , for example, we have

and

. For

, we have

. That is, nearly 98% of the values of the bounds proposed in this paper is less than 0.8 times of the one in (Equation3

(3)

(3) ).

Remark 2.2

Main differences with the previous work

Our methods have several key distinctions in construction of the more efficient lower and upper bounds of resident in OMP. First, Wang and Shim (Citation2016) obtained the upper bound of residual in OMP algorithm based on the following fundamental set:

We modified the above set to

which leads to a more efficient upper bound of resident in OMP algorithm (see inequality (Equation17

(17)

(17) ) of Section 3.3). Second, Livshitz and Temlyakov (Citation2014), Wang and Shim (Citation2016), and Zhang (Citation2011) only use RIP to obtain the lower bound of resident. However, in this paper, we not only used RIP, but also utilized the Wielandt inequality (Wang & Ip, Citation1999) to derive more efficient lower bound of resident (see inequality (Equation16

(16)

(16) ) of Section 3.3). The details can be found in the following sections.

3. Proof of the main theorem

3.1. Preliminaries

In the following, we introduce the subset of

based on the magnitude of elements in the set

. For

and

, let

(5)

(5)

(6)

(6)

It is clear that

and

with

.

Definition 3.1

If subset in

satisfies: (a)

and (b)

, then

is said to be the

characteristic set of x.

It is easy to verify that the characteristic set has the following properties:

, for

;

If

and

, then

;

and

, with

In fact, (P.1) is obvious as . For (P.2), since

, Γ can be rewritten as

. From (Equation6

(6)

(6) ) and

, we know that if

, then

. Hence

. That is

For (P.3), if

, it is obvious that

based on Definition 3.1. If

, then

From (P.2),

Thus

. From (P.1),

Otherwise, it is easy to verify that

for

. Thus we have

based on Definition 3.1.

From the fact , and (P.3), there exists

such that

, and

. Then it follows from (P.3) that

.

Let , thus

. It can be verified that there exists the maximum value of

over

. Since

based on the fact that

from (P.3) for

. Hence, we give the following definition.

Definition 3.2

Let , integer

is said to be the

character of x if it satisfies the following condition:

By Definition 3.2, it is easy to check that

and

(7)

(7)

Recalling that

for

and

, we have

. Thus

(8)

(8)

Based on

character

, we define the following integer sequence

as

(9)

(9)

Obviously, the sequence

is monotonically non-decreasing, i.e.,

3.2. Main idea

Inspired by Wang and Shim (Citation2016) and Zhang (Citation2011), we show that after k iterations, OMP can select a substantial amount of indices in for a specified number of additional iterations, and the rate of the number of additional iterations to the number of chosen indices is upper bounded by the constant α. More precisely, we give the following important proposition, which is the basis of Theorem 2.1.

Proposition 3.1

Suppose , and

, then we have

(10)

(10)

where

is the

character of x.

Now, we can prove Theorem 2.1 based on Proposition 3.1. The proof can be divided into two steps.

Step 1: We first prove that

(11)

(11)

Obviously, (Equation11

(11)

(11) ) holds when i = 0 since

. In the following, we assume that

. If

, then

from

. It follows that

If

, by substituting

into (Equation10

(10)

(10) ), we have

Thus

This completes the proof of (Equation11

(11)

(11) ).

Step 2: We prove that there exists a constant such that

. On one hand, from (Equation11

(11)

(11) ) we have

(12)

(12)

Thus

is a monotonically non-decreasing and bounded integer sequence. On the other hand, it is clear that

. Then from (Equation9

(9)

(9) ), one can easily show that

for

. Therefore, there must exist a constant

such that

. From (Equation12

(12)

(12) ), we have

, then

. Hence

, which completes the proof.

3.3. Sketch of proof of Proposition 3.1

We here give a sketch of the proof of Proposition 3.1, the remaining details of (Equation16(16)

(16) ) and (Equation17

(17)

(17) ) can be found in the Appendix. For notational simplicity, let

. From

, and (Equation8

(8)

(8) ), we have

(13)

(13)

and (Equation10

(10)

(10) ) can be rewritten as

(14)

(14)

By (P.2), a sufficient condition of the above inequality is

(15)

(15)

Now, what remains is the proof of (Equation15

(15)

(15) ), which is based on the analysis of the residual of OMP. First, by exploiting Wielandt inequality and RIP, we construct a lower bound for

, that is,

(16)

(16)

Next, by exploiting some properties of orthogonal projection matrix and RIP, we construct an upper bound for

,

(17)

(17)

From (Equation16

(16)

(16) ) and (Equation17

(17)

(17) ), it is easy to verify (Equation15

(15)

(15) ) holds. Hence the remains is the proof of (Equation16

(16)

(16) ) and (Equation17

(17)

(17) ) (see Appendices).

4. Conclusion

In this paper, we analyse the number of iterations required for OMP to exactly recover sparse signals. Our analysis shows that OMP can recover any K-sparse signals in iterations (

), which is uniformly smaller than the one proposed in Wang and Shim (Citation2016). For example, to accurately recover K-sparse signals, it has been shown in Wang and Shim (Citation2016) that OMP requires

iterations with

while our result shows that OMP requires only

iterations with

. In practical application, a large number of iterations often lead to a high computational complexity. Our result provides a theoretical basis for the reduction of the number of iterations required for OMP.

Disclosure statement

No potential conflict of interest was reported by the author(s).

Additional information

Funding

References

- Bernstein, D. S. (2005). Matrix mathematics: Theory, facts, and formulas with application to linear systems theory. Princeton University Press.

- Cai, T., & Wang, L. (2011). Orthogonal matching pursuit for sparse signal recovery with noise. IEEE Transactions on Information Theory, 57(7), 4680–4688. https://doi.org/10.1109/TIT.2011.2146090

- Cai, T., Wang, L., & Xu, G. (2010). New bounds for restricted isometry constants. IEEE Transactions on Information Theory, 56(9), 4388–4394. https://doi.org/10.1109/TIT.2010.2054730

- Cai, T., Xu, G., & Zhang, J. (2009). On recovery of sparse signals via l1 minimization. IEEE Transactions on Information Theory, 55(7), 3388–3397. https://doi.org/10.1109/TIT.2009.2021377

- Candes, E. J., & Tao, T. (2005). Decoding by linear programming. IEEE Transactions on Information Theory, 51(12), 4203–4215. https://doi.org/10.1109/TIT.2005.858979

- Chang, L. H., & Wu, J. Y. (2014). An improved RIP-based performance guarantee for sparse signal recovery via orthogonal matching pursuit. IEEE Transactions on Information Theory, 60(9), 405–408. https://doi.org/10.1109/TIT.18

- Davenport, M. A., & Wakin, M. B. (2010). Analysis of orthogonal matching pursuit using the restricted isometry property. IEEE Transactions on Information Theory, 56(9), 4395–4401. https://doi.org/10.1109/TIT.2010.2054653

- Huang, S., & Zhu, J. (2011). Recovery of sparse signals using OMP and its variants: convergence analysis based on RIP. Inverse Problems, 27(3), 035003. https://doi.org/10.1088/0266-5611/27/3/035003

- Li, H. F., & Wen, J. M. (2019). A new analysis for support recovery with block orthogonal matching pursuit. IEEE Signal Processing Letters, 26(2), 247–251. https://doi.org/10.1109/LSP.2018.2885919

- Liu, C., Fang, Y., & Liu, J. Z. (2017). Some new results about sufficient conditions for exact support recovery of sparse signals via orthogonal matching pursuit. IEEE Transactions on Signal Processing, 65(17), 4511–4524. https://doi.org/10.1109/TSP.2017.2711543

- Livshitz, E. D. (2012). On the efficiency of the orthogonal matching pursuit in compressed sensing. Sbornik Mathematics, 203(2), 33–44. https://doi.org/10.4213/sm

- Livshitz, E. D., & Temlyakov, V. N. (2014). Sparse approximation and recovery by greedy algorithms. IEEE Transactions on Information Theory, 60(7), 3989–4000. https://doi.org/10.1109/TIT.2014.2320932

- Mo, Q. (2015). A sharp restricted isometry constant bound of orthogonal matching pursuit. arXiv: 1501.01708 [Online]. Available: http://arxiv.org/pdf/1501.01708v1.pdf.

- Tropp, J. A., & Gilbert, A. C. (2007). Signal recovery from random measurements via orthogonal matching pursuit. IEEE Transactions on Information Theory, 53(12), 4655–4666. https://doi.org/10.1109/TIT.2007.909108

- Wang, J., & Shim, B. (2016). Exact recovery of sparse signals using orthogonal matching pursuit: How many iterations do we need. IEEE Transactions on Signal Processing, 64(16), 4194–4202. https://doi.org/10.1109/TSP.2016.2568162

- Wang, S. G., & Ip, W. -C. (1999). A matrix version of the Wielandt inequality and its application to statistics. Linear Algebra Its Appl, 2, 118–120. https://doi.org/10.1016/S0024-3795(99)00117-2

- Wen, J., Zhang, R., & Yu, W. (2020). Signal-dependent performance analysis of orthogonal matching pursuit for exact sparse recovery. IEEE Transactions on Signal Processing, 68, 5031–5046. https://doi.org/10.1109/TSP.78

- Wen, J., Zhou, Z., Wang, J., Tang, X., & Mo, Q. (2017). A sharp condition for exact support recovery with orthogonal matching pursuit. IEEE Transactions on Signal Processing, 65(6), 1370–1382. https://doi.org/10.1109/TSP.2016.2634550

- Wu, R., Huang, W., & Chen, D.-R. (2013). The exact support recovery of sparse signals with noise via orthogonal matching pursuit. IEEE Signal Processing Letters, 20(4), 403–406. https://doi.org/10.1109/LSP.2012.2233734

- Zhang, T. (2011). Sparse recovery with orthogonal matching pursuit under RIP. IEEE Transactions on Information Theory, 57(9), 6215–6221. https://doi.org/10.1109/TIT.2011.2162263

Appendices

Appendix 1. Proof of (16)

The proof of (Equation16(16)

(16) ) is based on the Wielandt inequality (Wang & Ip, Citation1999), which is presented in the following Lemma.

Lemma A.1

Wielandt inequality

Let be an n-order positive-definite matrix with

Then we have

(A1)

(A1)

We now proceed to the proof of (Equation16(16)

(16) ). The conclusion holds naturally if

In the following, we prove that (Equation16

(16)

(16) ) still holds under

. Without loss of generality, we assume that

, and

, where

. Notice that

(A2)

(A2)

By (Equation13

(13)

(13) ), we have

. Furthermore, from the monotonicity of the RIC and

, we have

It implies that

(A3)

(A3)

Since (EquationA2

(A2)

(A2) ), (EquationA3

(A3)

(A3) ), and Lemma A.1, we have

(A4)

(A4)

Hence by (EquationA4

(A4)

(A4) ), it follows that

This completes the proof.

Appendix 2. Proof of (17)

The proof of (Equation17(17)

(17) ) is based on the following Lemma A.2, which generalized the results of Wang and Shim (Citation2016) to the complex field.

Lemma A.2

Suppose , and Γ be a nonempty subset of

, the residual of OMP satisfies

(A5)

(A5)

where

for any integer

.

Proof.

Since , it follows that

. Notice that

. Thus if

or

, then Lemma A.2 holds evidently. In the following, we assume that

and

(A6)

(A6)

It implies that

(A7)

(A7)

We later proved that the residual of OMP satisfies

(A8)

(A8)

for

Then (EquationA5

(A5)

(A5) ) can be obtained by (EquationA8

(A8)

(A8) ) as follows,

(EquationA8

(A8)

(A8) ) can be proved as follows. It is easy to check that

. Thus

Furthermore,

(A9)

(A9)

where (a), (b), (c), (d) above can be obtained as follows: (a) is from that fact that

(b) is due to

(c) is because

and

(d) is followed from the definition of RIP.

Now we construct a lower bound for below.

Notice that for

and

. Thus we have

(A10)

(A10)

From (EquationA10

(A10)

(A10) ), it follows that

(A11)

(A11)

Notice that

. From (EquationA11

(A11)

(A11) ), we have

(A12)

(A12)

Hence by (EquationA7

(A7)

(A7) ) and (EquationA12

(A12)

(A12) ), we have

(A13)

(A13)

and

.

Now we prove

(A14)

(A14)

In fact, if

then

and

. From RIP, we have

For

we have

Then from (EquationA12

(A12)

(A12) ), (EquationA14

(A14)

(A14) ) and the arithmetic-geometric mean inequality, it can be verified that

(A15)

(A15)

On the other hand, we have

for

. Thus

(A16)

(A16)

Notice that

. Combining (EquationA15

(A15)

(A15) ) and (EquationA16

(A16)

(A16) ), we obtain

(A17)

(A17)

This, together with (EquationA9

(A9)

(A9) ), implies

(A18)

(A18)

Hence (EquationA18

(A18)

(A18) ) can be rewritten as

(A19)

(A19)

Let

, then

. From monotonicity of the RIC, we can obtain that

for

. Thus from (EquationA19

(A19)

(A19) ), we have

for

. This completes the proof of (EquationA8

(A8)

(A8) ).

We now proceed to the proof of (Equation17(17)

(17) ). Let

and

Let

and

in (EquationA5

(A5)

(A5) ). Notice that

Then it follows that

(A20)

(A20)

where

In the following, we construct an upper bound for

. Recall that

. Then from Appendix 2 in Wang and Shim (Citation2016), we have

(A21)

(A21)

By (P.1) and (EquationA21

(A21)

(A21) ), it follows that

(A22)

(A22)

Notice that

and

for

. From (Equation13

(13)

(13) ) and (EquationA22

(A22)

(A22) ), we obtain

Furthermore, from monotonicity of the RIC and

, we have

(A23)

(A23)

Hence,

(A24)

(A24)

Together with (EquationA20(A20)

(A20) ), it implies that

(A25)

(A25)

for

Note that we only need to consider

since if

, (EquationA25

(A25)

(A25) ) holds from

By substituting (Equation7

(7)

(7) ) into (EquationA25

(A25)

(A25) ), we further obtain

(A26)

(A26)

From

and (Equation7

(7)

(7) ), we have

(A27)

(A27)

Notice that

and

, Then by (EquationA22

(A22)

(A22) ), (EquationA26

(A26)

(A26) ), (EquationA27

(A27)

(A27) ), we obtain

Thus (Equation17

(17)

(17) ) holds. This completes the proof.