?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.

?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.ABSTRACT

A family of three-step optimal eighth-order iterative algorithm is developed in this paper in order to find single roots of nonlinear equations using the weight function technique. The newly proposed iterative methods of eight order convergence need three function evaluations and one first derivative evaluation that satisfies the Kung–Traub optimality conjecture in terms of computational cost per iteration (i.e.). Furthermore, using the primary theorem that establishes the convergence order, the theoretical convergence properties of our schemes are thoroughly investigated. On several engineering applications, the performance and efficiency of our optimal iteration algorithms are examined to those of existing competitors. The new iterative schemes are more efficient than the existing methods in the literature, as illustrated by the basins of attraction, dynamical planes, efficiency, log of residual, and numerical test examples.

1 Introduction

The earliest problem in science, and primarily in mathematics, is finding the roots of nonlinear equation

(1)

(1) Nonlinear equations are used in a broad variety of fields in science and engineering [Citation1–3]. When real problems exist, such as weather forecasting, the correct placement of satellite systems in desired trajectories, earthquake magnitude estimation, and other advanced technical issues, only approximate techniques are frequently developed. Only in rare situations is it possible to solve the governing equations exactly. To determine the roots of nonlinear equation (1), several iterative approaches have been explored such as the Newton–Raphson method, decomposition methods, homotopy analysis method, and various modified forms of the Newton–Raphson method. The Newton–Raphson method [Citation4] iterative scheme is the most well-known single root finding method:

(2)

(2)

The method described by (2) has optimum convergence order 2 with efficiency Several higher order iterative algorithms for solving nonlinear equations have already been developed and researched in the past decade, namely Newton’s method, Chebyshev method, Halley’s iteration technique, and others see e.g. Wang et al. [Citation6] present Modified Ostrowski’s method of eight-order convergence, Li et al. [Citation7] proposed a higher order iterative method, Kung et al. [Citation20] presented optimal order of one point and multipoint iterative schemes, Chicharro, et al. [Citation28] illustrate the stability and applicability of iterative methods with memory, Zafar et al. [Citation29] proposed efficient family of the iterative method of eight order convergence, Tao et al. [Citation30] used divide difference techniques to construct optimal fourth- and eighth-order convergent scheme and Shacham et al. [Citation31] proposed higher order improved memory methods for finding roots of non-linear equations. As the order of convergence increases, the number of function evaluations per iteration increases. As a result, a new performance criterion has emerged: efficiency, which indicates that any multi-point technique without memory that performs n function evaluations cannot have an order of convergence higher than 2

, which is the optimum order. Thus, for three evaluations per iteration, the optimal order is four, for four evaluations per iteration, eight, and so on. As a consequence, the overall aim of this study is to develop more efficient iterative schemes in comparison with existing methods in the literature. We developed a new iterative method to increase the order of convergence without increasing the functional evaluations. We can easily acquire the optimal order; as we will see, the obtained three-step method is of eighth-order convergence and takes four evaluations of the function

. The efficiency of an iterative method is commonly analysed in the literature using the efficiency index

, where

is the order of convergence and

is the total number of functional evaluations or its derivative evaluations in each iteration. As a result, this technique has an efficiency index of

, indicating that it is the first to achieve the optimal order of convergence according to the Kung–Traub conjecture [Citation20].

The weight function technique motivates us to propose a family of the eighth-order numerical iterative scheme for finding roots of (1). In [Citation10–13,Citation21–24], which use a variety of weight functions, how weight function processes can be applied is given. In this article, we employ the weight functions technique to construct a family of iterative algorithms with a high convergence order and efficiency index. Each iteration requires the evaluation of three functions and one first order derivative in terms of computational cost.

The conditions of Theorem 1.1 presented in this article are broad and fundamental for constructing an iterative technique for finding roots of nonlinear equations. Selecting functions that satisfy the theorem’s conditions is all that is required for a certain iterative technique. For example, one may write out the desired functions first, then use the theorem’s criterion to calculate the coefficients in the functions. Specific iterative techniques are illustrated in subsection (Concrete’s eight-order methods). The method’s attractiveness is because the developed scheme is directly applicable and requires the minimum number of functional evaluations available. Using a complex dynamical system, we choose the parameter values in the numerical scheme that yield the highest convergence rate. In terms of basins of attraction, we may compare our newly developed algorithms to a number of existing iterative techniques with the same convergence order and computational cost.

Here, we discuss some optimal eighth-order iterative methods for finding roots of nonlinear equations that exist in the literature.

The optimal eighth-order iterative method (abbreviated as MM1) due to Kung–Traub et al. [Citation20] based on the Hermite interpolation is given by

(3)

(3) where

The following optimal eighth-order iterative approach was introduced by Babajee et al. [Citation15] (abbreviated as MM2)

(4)

(4) where

,

,

The optimal eighth-order iterative technique (abbreviated as MM3) given by Cordero et al. [Citation16] is as follows:

(5)

(5) where

and

.

Sharm’s family [Citation17] of the optimal eighth-order method (abbreviated as MM4) is

(6)

(6) where

and

.

Thukral [Citation18] presented the following optimal eighth-order iterative method (abbreviated as MM5):

(7)

(7) where

,

,

.

Wang and Liu [Citation19] presented Hermite interpolation based optimal eighth-order iterative method (abbreviated as MM6):

(8)

(8) where

.

Liu and Wang’s [Citation33] presented the following optimal eighth-order iterative method (abbreviated as MM7):

(9)

(9) where

and α1, α2R.

Here, we proposed the following family of optimal eighth order methods:

(10)

(10) where

and

are real-valued functions to be determined later on. For the iteration scheme (10), we have the following convergence theorem [Citation11]:

Theorem 1.1:

Let be a simple root of a sufficiently differentiable function

in an open interval I. If

is sufficiently close to

and

be a real-valued function satisfying

,

and

then the convergence order of the family of iterative method (10) is eight and the error equation is given by

(11)

(11) where

Proof:

Let be a simple root of

and

By Taylor’s series about

taking

we get:

(12)

(12) and

(13)

(13) Dividing (12) by (13), we have

(14)

(14) Using (14) in the first step of (10), we have:

(15)

(15) Thus, using Taylor series, we have:

(16)

(16) Dividing (16) by (13), we have:

(17)

(17)

(18)

(18) This gives

(19)

(19) Multiplying (19) and (17), we have:

(20)

(20) This implies,

(21)

(21)

(22)

(22)

(23)

(23)

(24)

(24)

(25)

(25) By Taylor series, we obtain:

(26)

(26)

(27)

(27)

(28)

(28) This implies

(29)

(29)

(30)

(30)

(31)

(31)

(32)

(32)

(33)

(33) Now putting

and

in (33), we have:

(34)

(34) Hence the result is proved.

1.1 Concrete's eight-order methods

We develop some concrete forms of iterative methods described by algorithm (10) in this section. Let us take the function where

and

,

,

satisfying the condition

and

of Theorem 1.1 and choose

Therefore, we get following five new three-step iterative methods of order eight:

Method-1 (S1): Choosing and

satisfying the conditions

,

and

of Theorem 1.1. Thus, we have the following iterative scheme as

(35)

(35) where

.

Method-2 (S2): Choosing and

where

satisfying the conditions

,

and

of Theorem 1.1. Thus, we have the following iterative scheme as

(36)

(36) where

Method-3 (S3): Choosing and

satisfying the conditions

,

and

of Theorem 1.1. Thus, we have the following iterative scheme as follows:

(37)

(37) where

Method-4 (S4): Choosing and

where

satisfying the condition

,

and

of Theorem 1.1. Thus, we have the following iterative scheme as

(38)

(38) where

.

Method-5 (S5): Choosing and

satisfying the condition

,

and

of Theorem 1.1. Thus, we have the following iterative scheme as

(39)

(39)

where

.

2 Dynamical analysis

In this study, we characterize the stability of all fixed points and investigate the behaviour of these various approaches when applied to quadratic polynomials. The numerical iterative method for finding the roots of nonlinear equations generates the following rational map is given as

(40)

(40) where

The dynamical theories of complex dynamics that we apply in this work will now be reviewed (see [Citation28,Citation32]). The orbit of a point is defined as follows

given a rational function

, where

is the Riemann sphere. A point

is called fixed point if

A periodic point z is a point such that

and

for

In particular, a fixed point is attractor if

superattractor if

repulsor if

and parabolic if

Therefore, the super attracting fixed point is also known as the critical point. An attracting point

is defined on the basis of attraction,

, as the set of starting points whose orbit tends to

The scaling theorem enables an appropriate modification of the coordinate to reduce dynamics of iteration of general maps and examines a particular family of iterations of similar maps. As all of the numerical techniques S1–S5 and MM1–MM5 satisfy the scaling theorem and allows dynamics studies.

Theorem 2.1:

Rational map arsis from iterative methods S1 applied to an arbitrary quadratic polynomial where

is conjugate via Möbius transformation by

as

(41)

(41) where

Proof:

Let where

Möbius transformation is

(42)

(42) and inverse Möbius transformation is given as

(43)

(43) which is considered as a map

Then, we have

(44)

(44) where

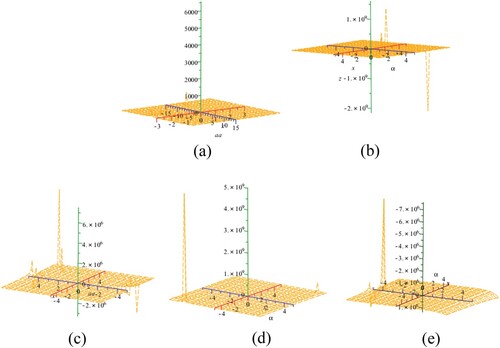

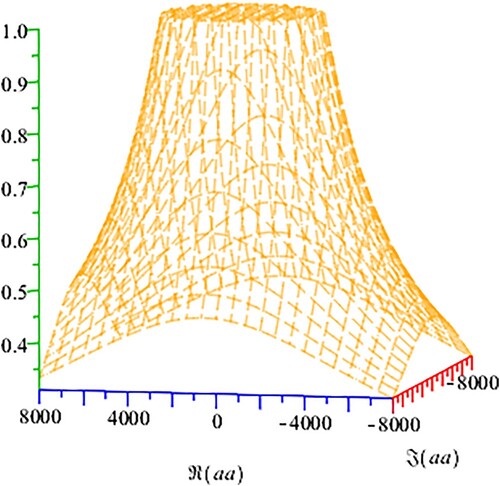

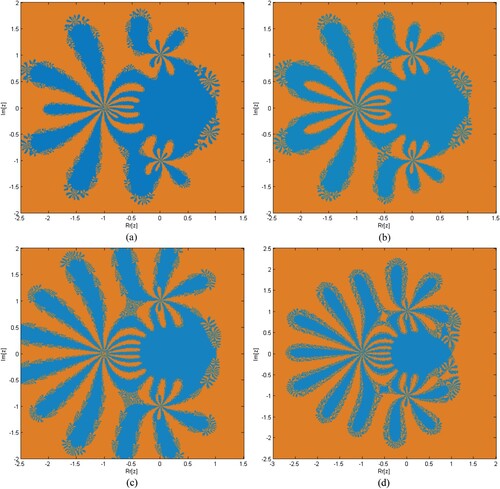

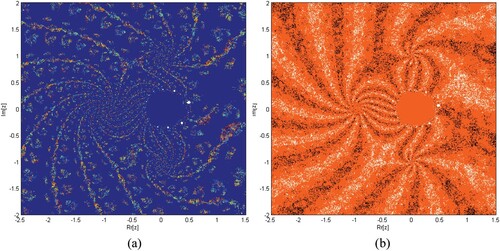

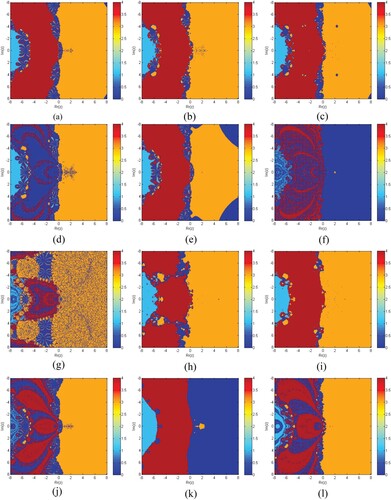

Similarly, we prove theorem for S2–S5 and MM1–MM7. The unions of the associated stability functions of all the strange fixed points of rational maps (S1–S5) are shown in Figure (a)-1 (41) (Figures ).

Figure 3. (a–d) depicts the dynamical planes corresponding to the stable behaviour of iterative method S1 for various values of respectively.

Figure 4. (a,b) depicts the dynamical planes corresponding to the unstable behaviour of iterative method S1 for various values of .

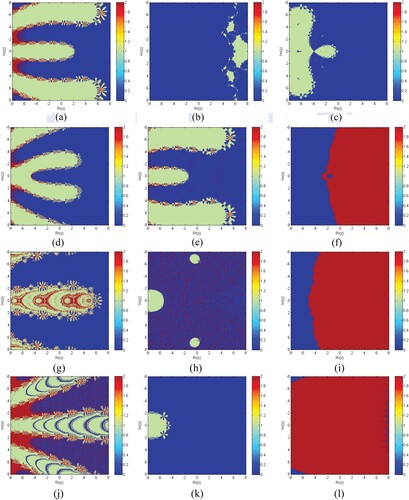

Figure 5. (a-l) depicts the dynamical planes of iterative methods S1–S5, MM1–MM5 for , respectively.

The fixed point of for rational map are

,

and

. For the stability of fixed point of iterative maps

we compute

.

The fixed points and

are always super attractive, as seen in (1d) but the stability of other fixed points depends on the value of the parameter

, which is present in this case.

For , the operator

yields

(45)

(45) The stability of the strange fixed point for

is illustrated in the next result.

Theorem 2.2:

The character of the strange point is as follows.

if

then

is an attractor and it can be super attractor for

when

,

is a parabolic point.

if

and

then

is a repulsive.

Proof:

It is easy to prove

(46)

(46) So,

(47)

(47)

Let us consider an arbitrary complex number.

Then

(48)

(48) therefore

Finally, if

varies, then

then

and

is a repulsive, except if

for which

is not a fixed point

The functions where we examine stability of iterative method S1 are given as

(49)

(49) Dynamical Planes: Parametric planes are obtained by taking

over a mesh of

values in complex plane in

.

Taking

as a tolerance used as a stopping criterion. If the technique converges to either of the roots (0 and 1), the complex value of the parameter in the complex plane is painted in various colours, and black in all other cases.

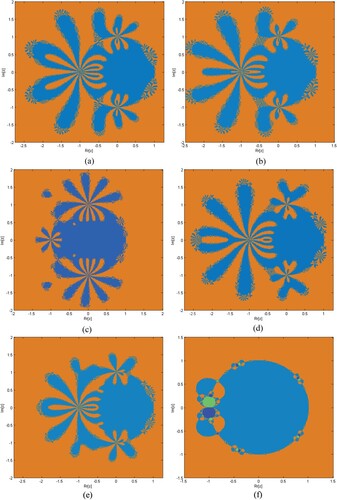

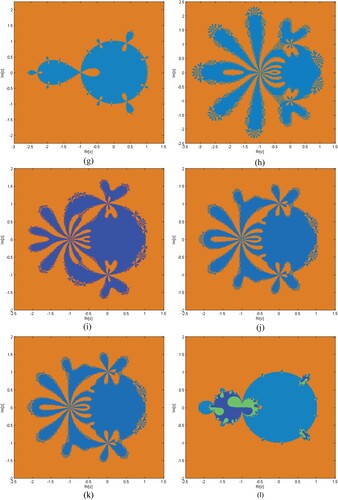

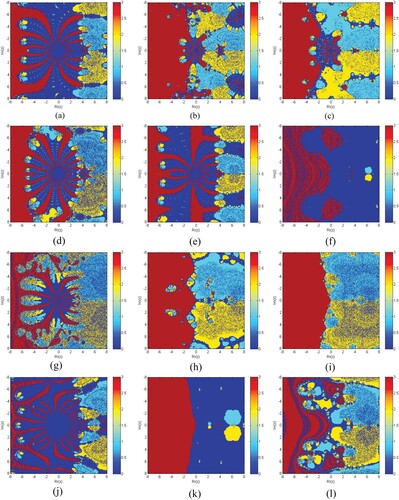

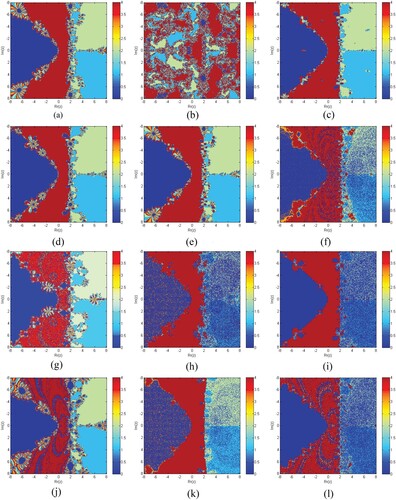

Basins of Attraction: To provoke the basins of attraction of iterative schemes S1–S5, MM1–MM5 for determining the roots of the nonlinear equations, we execute the real and imaginary parts of the starting approximations represented as two axes over a mesh of in complex plane. Use

as a stopping criterion and consider maximum 20 iterations. We allow different colours to mark to which root the iterative scheme converges and black in other cases. Colour brightness in basins shows a less number of iterations.

Table clearly shows that the elapsed time of S1–S5 is better than MM1–MM7 for generating the basins of attraction of , respectively. Elapsed time and brightness in colour of Figures (a–f) show dominance in efficiency of our methods S1–S5 as compared to MM1–MM7, respectively.

Figure 6. (a–l) show basins of attraction for methods S1–S5 and MM1–MM7, respectively, for function .

Figure 7. (a–l) show basin of attraction for methods S1–S5 and MM1–MM7, respectively, for function .

Figure 8. (a–l) show basins of attraction for methods S1–S5 and MM1–MM7, respectively, for function .

Figure 9. (a–l) show basins of attraction for methods S1–S5 and MM1–MM7, respectively, for function .

Table 1. Elapsed time in seconds.

3 Numerical outcomes

Some numerical examples [Citation5,Citation9,Citation10,Citation18,Citation25–32] are being used to test the efficiency of our three-step eight-order single root finding methods S1–S5 compared to MM1–MM7. All computations are performed in Maple 18 with 2500 significant digits and the following termination criteria:

where

represents the absolute error of function values in

and norm-2 in

[Citation8]. We take

for single root finding method. Numerical tests examples are provided in Tables . In all tables,

represents computational order of convergence [Citation14] and CPU represents computational time in seconds.

3.1 Real-world application

In this section, we discuss some real-world applications whose solutions are approximated by our newly constructed methods S1–S5.

Table 2. Numerical comparison of S1–S5 and MM1–MM7.

Table 3. Numerical comparison of S1–S5 and MM1–MM7.

Table 4. Numerical comparison of S1–S5 and MM1–MM7.

Table 5. Numerical comparison of S1–S5 and MM1–MM7.

Table 6. Numerical comparison of S1–S5 and MM1–MM7.

Table 7. Numerical comparison of S1–S5 and MM1–MM7.

3.1.1 Solar energy system: a mechanical engineering problem

In studies of solar energy collection by focusing a field of plane mirrors on a central collector, one researcher obtained the following equation for geometrical concentration factor c:

(50)

(50)

(51)

(51) where

is the rim angle of the field. F is the fraction coverage of the field with mirrors. D is the diameter of the collector and h is the height of the collector. To find rim angle

i.e. solution of (51), we choose h = 300, c = 1200, F = 0.8, and D = 14 and choose

where

.

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual error of our iterative schemes S1–S5 are better than MM1–MM5.

3.1.2 Water discharge problem

Water is discharged from a reservoir through a long pipe. By neglecting the change in the level of the reservoir, the transient velocity v(t) of the water flowing from the pipe at time t is given by

(52)

(52)

(53)

(53) where h is the height of the fluid in the reservoir,

is the length of the pipe, g = 9.81 m/s

is gravity. Finding the value of h necessary to achieve a velocity of v = 4 m/s at time t = 4 sec, when

= 5 m. Using S1–S5, MM1–MM7 numerical iterative methods for calculating the exact root of (53), we start with

and obtain the following results.

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual error of our iterative schemes S1–S5 are better than MM1–MM5.

3.1.3 Projectile motion

Consider a projectile [Citation17,Citation18,Citation30] which is projected from a tower of height onto a hill with initial speed v with angle

Define an impact function

which depends on horizontal distance x. The path function of projectile is defined as

(54)

(54) When the projectile hits the hill there exist a value of x for which

. We have to find such a value of

which maximizes

therefore differentiating (54) with respect to

i.e.

(55)

(55) putting

we have:

(56)

(56)

(57)

(57) Taking enveloping parabola

[Citation27], we have:

(58)

(58) Differentiating (58) and setting

we have:

(59)

(59)

(60)

(60) Thus, our enveloping parabola becomes:

(61)

(61) For the solution of the parabola first we find x by taking

and

in (47), we can write:

(62)

(62) and

(63)

(63) The exact roots of (62) are

The initial estimate for is taken as

:

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual errors of our iterative schemes S1–S5 are better than MM1–MM5.

3.1.4 Fluid permeability in bio gels:

Specific hydraulic permeability [Citation34] relates the pressure gradient to fluid velocity in a porous medium (agarose gel or extracellular fibre matrix) and is shown in the following non-linear polynomial equations:

(64)

(64) or

(65)

(65) where

is specific hydraulic permeability,

radius of the fibre, and

is the porosity [Citation35]. Using

and

we have:

(66)

(66) The exact roots of (66) are

We choose as an estimate for determination of roots (66).

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual error of our iterative schemes S1–S5 are better than MM1–MM5.

3.1.5 Beam designing model [29]

Consider a problem of beam positioning [Citation25,Citation26], resulting in a nonlinear function given as

(67)

(67) The exact roots of (67) are

The initial estimates for are taken as

our required exact root is 2.

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual error of our iterative schemes S1–S5 are better than MM1–MM5.

3.1.6 Fractional conversion [28,29]

The following expression [Citation31,Citation32] describes the fractional conversion of nitrogen, hydrogen feed at 250 atm. and 227 K.

(68)

(68) The exact roots of (68) are

The initial estimate for is taken as

and our desired exact roots is

.

The corresponding results for are clearly shown in Table . The theoretical order of convergence matches the computational order of convergence. On the same number of iterations, the computation residual error of our iterative schemes S1–S5 are better than MM1–MM5.

4 Conclusion

A new family of optimal three-point eighth-order numerical iterative algorithms for approximating a simple root of a given nonlinear equation is developed using the weight function technique. The software CAS-Maple has been used to prove that the optimal technique is convergent with convergence order equals eight. The methods require only four function evaluations every iteration, resulting in an optimal order eight method. As a result, the Kung and Traub conjecture holds true for the new methods. The proposed schemes are tested against various existing schemes, demonstrating the superiority of the proposed methods. Six engineering application problems are solved, including projectile motion, beam designing model, and fractional conversion, with the new approaches excelling the other methods. Further research has been done on the complex plane for such methods to expose their basins of attraction for solving complex polynomials by presenting the fractal graphs that correlate to them. The numerical results of the proposed methods, as well as the elapsed time for their fractal graphs in Table , indicate that the novel methods are a feasible alternative for solving scalar nonlinear equations. Tables show the numerical results. On the third iteration, Tables clearly show that the residual error, CPU time, and local computational order of convergence of newly proposed methods S1–S5 are significantly superior to existing methods MM1–MM7.

Disclosure statement

No potential conflict of interest was reported by the author(s).

References

- Consnard M, Fraigniaud P. Finding the roots of a polynomial on an MIMD multicomputer. Parallel Comput. 1990;15(1):75–85.

- Agarwal P, Filali D, Akram M, et al. Convergence analysis of a three-step iterative algorithm for generalized set-valued mixed-ordered variational inclusion problem. Symmetry (Basel). 2021;13(3):444.

- Sunarto A, Agarwal P, Sulaiman J, et al. Iterative method for solving one-dimensional fractional mathematical physics model via quarter-sweep and PAOR. Adv Differ Equ. 2021;1:147.

- Attary M, Agarwal P. On developing an optimal Jarratt-like class for solving nonlinear equations. Ital J Pure Appl Math. 2020;43:523–530.

- Sendov B, Andereev A, Kjurkchiev N. Numerical solutions of polynomial equations. New York: Elsevier science; 1994.

- Wang X, Liu L. Modified Ostrowski’s method with eight -order convergence and high efficiency index. Appl Math Lett. 2010;23:549–554.

- Li TF, Li DS, Xu ZD, et al. New iterative methods for non-linear equations. Appl Math Comput. 2008;2:755–759.

- Kumar S, Kumar D, Sharma JR, et al. An optimal fourth order derivative-free numerical algorithm for multiple roots. Symmetry (Basel). 2020;12(6):1038.

- Mir NA, Muneer R, Jabeen I. Some families of two-step simultaneous methods for determining zeros of non-linear equations. ISRN Appl Math. 2011;2011:1–11.

- Farmer MR. Computing the zeros of polynomials using the divide and conquer approach. [dissertation]. Ph.D Thesis. Department of Computer Science and Information Systems, Birkbeck: University of London; 2014.

- Bi W, Ren H, Wu Q. Three-step iterative methods with eighth-order convergence for solving nonlinear equations. J Comput Appl Math. 2009;225:105–112.

- Chun C, Ham YM. Some sixth-order variants of Ostrowski root-finding methods. Appl Math Comput. 2007;193:389–394.

- Wang X, Liu L. Two new families of sixth-order methods for solving non-linear equations. Appl Math Comput. 2009;213(1):73–78.

- Jay LO. A note on Q-order of convergence. Bit Numer Math. 2001;41:422–429.

- Babajee DKR, Cordero A, Soleymani F, et al. On improved three-step schemes with high efficiency index and their dynamics. Numer Algor. 2014;65:153–169.

- Cordero A, Fardi M, Ghasemi M, et al. Accelerated iterative methods for finding solutions of nonlinear equations and their dynamical behavior. Calcolo. 2014;51:17–30.

- Sharma JR, Sharma R. A new family of modified Ostrowski’s methods with accelerated eighth order convergence. Numer Algor. 2010;54:445–458.

- Liu L, Wang X. Eighth-order methods with high efficiency index for solving nonlinear equations. Appl Math Comput. 2010;215:3449–3454.

- Wang X, Liu L. New eighth-order iterative methods for solving nonlinear equations. J Comput Appl Math. 2010;234:1611–1620.

- Kung HT, Traub JF. Optimal order of one-point and multipoint iteration. J Assoc Comput Mach. 1974;21(643):651.

- Shacham M. An improved memory method for the solution of a nonlinear equation. Chem Eng Sci. 1989;44:1495–1501.

- Balaji GV, Seader JD. Application of interval Newton’s method to chemical engineering problems. Reliab Comput. 1995;1:215–223.

- Babajee DKR, Madhu K. Comparing two techniques for developing higher order two-point iterative methods for solving quadratic equations. SeMA J. 2018; 76(2):1–22.

- Kantrowitz R, Neumann MM. Some real analysis behind optimization of projectile motion. Mediterr J Math. 2014;11:1081–1097.

- Keshet EL. Differential calculus for the life sciences. Vancouver, BC: University of British Columbia; 2017.

- Zachary JL. Introduction to scientific programming: computational problem solving using Maple and C. New York (NY): Springer; 2012.

- Henelsmith N. Finding the optimal launch angle. Walla Walla (WA): Whitman College; 2016.

- Chicharro FI, Cordero A, Garrido N, et al. Stability and applicability of iterative methods with memory. J Math Chem. 2019;57(5):1282–1300.

- Zafar F, Cordero A, Torregrosa JR. An efficient family of optimal eighth-order multiple root finders. Mathematics. 2018;6:310.

- Tao Y, Madhu K. Optimal fourth, eighth and sixteenth order methods by using divided difference techniques and their basins of attraction and its application. Mathematics. 2019;7:322.

- Shacham M. An improved memory method for the solution of a nonlinear equation. Chem Eng Sci. 1989;44:1495–1501.

- Argyros IK, Magreñán AA, Orcos L. Local convergence and a chemical application of derivative free root finding methods with one parameter based on interpolation. J Math Chem. 2016;54:1404–1416.

- Liu L, Wang X. Eighth-order methods with high efficiency index for solving nonlinear equations. Appl Math Comput. 2010;215:3449–3454.

- Fournier RL. Basic transport phenomena in biomedical engineering. New York (NY): Taylor & Franics; 2007.

- Saltzman WM. Drug delivery: engineering principal for drug therapy. New York (NY): Oxford University Press; 2001.