Figures & data

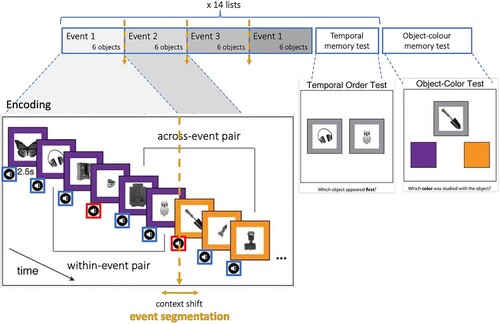

Figure 1. Schematic of the experimental procedure. Encoding task with sequentially presented grey-scale objects and experimental manipulation of two factors: event segmentation (changes of the frame colour) and emotion (neutral or aversive sound). A temporal order memory test was administered immediately after each of the 14 lists. A surprise object-colour test was administered at the end of the experiment, after all 14 lists of encoding and temporal order memory tests.

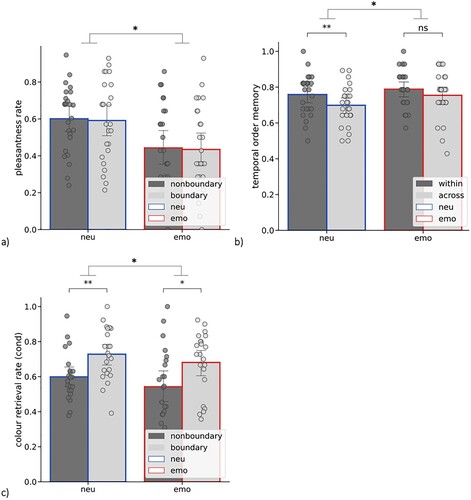

Figure 2. (a) Pleasantness rate (% of “pleasant” ratings) as a function of event segmentation (nonboundary vs. boundary) and emotion (neu – paired with a neutral sound, emo – paired with an aversive sound); (b) Temporal order memory as a function of event segmentation (within-event vs. across-event) and emotion (neu – spanning neutral sounds, emo – spanning an aversive sound); (c) Object-colour memory (for objects correctly recognized as “old” = cond) as a function of event segmentation (nonboundary vs. boundary) and emotion (neu – paired with a neutral sound, emo – paired with an aversive sound); error bars represent one SD, dots represent individual subjects’ scores; ∗ p < .05, ∗∗ p < .005.

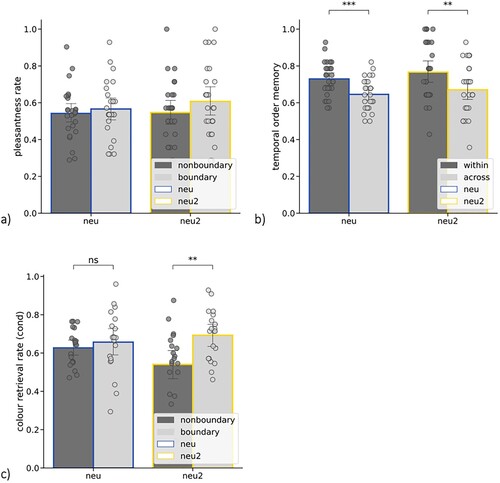

Figure 3. (a) Pleasantness rate (% of “pleasant” ratings) as a function of event segmentation (nonboundary vs. boundary) and oddballness (neu – paired with a neutral sound, neu2 – paired with an oddball neutral sound); (b) Temporal order memory as a function of event segmentation (within-event vs. across-event) and oddballness (neu – spanning neutral sounds, neu2 – spanning an oddball sound); (c) Object-colour memory (for objects correctly recognized as “old” = cond) as a function of event segmentation (nonboundary vs. boundary) and oddballness (neu – paired with a neutral sound, neu2 – paired with an oddball sound); error bars represent one SD, dots represent individual subjects’ scores; ∗∗ p < .005, ∗∗∗ p < .001.

Data availability statement

All data necessary to verify, interpret and extend published research will be freely available through a dedicated repository: https://osf.io/g2xer/?view_only=7417395df7ae421e88f30798e13afc8d.