Figures & data

Figure 1. Classification of images by the VGG-16 network. Top row: original images from the Caltech 101 dataset (Fei-Fei, Fergus, and Perona 2004); bottom row: the same images under a random uniform illumination cast.

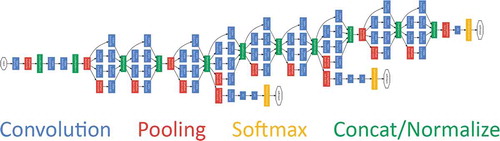
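The bottom row of Figure 1 shows images recolored by a random illuminant. A minimal sketch of how such a cast can be produced follows. It assumes the common diagonal (von Kries) model, where a single, spatially uniform illuminant multiplies every pixel channel by channel, and it assumes each illuminant component is drawn uniformly from a hypothetical range [0.2, 1.0]. The names `random_illuminant` and `apply_cast` are illustrative and not taken from the paper.

```python
import random
from typing import List, Tuple

RGB = Tuple[float, float, float]

def random_illuminant(rng: random.Random, low: float = 0.2, high: float = 1.0) -> RGB:
    # Hypothetical sampling scheme: each RGB component of the light is
    # drawn independently from a uniform distribution on [low, high].
    return (rng.uniform(low, high), rng.uniform(low, high), rng.uniform(low, high))

def apply_cast(image: List[RGB], illum: RGB) -> List[RGB]:
    # Diagonal (von Kries) model: the same illuminant scales every pixel
    # channel-wise, so the cast is spatially uniform. Values are clipped to [0, 1].
    return [
        (min(1.0, r * illum[0]), min(1.0, g * illum[1]), min(1.0, b * illum[2]))
        for r, g, b in image
    ]

if __name__ == "__main__":
    rng = random.Random(0)
    image = [(0.5, 0.5, 0.5), (0.9, 0.2, 0.4)]  # toy image as a list of RGB pixels in [0, 1]
    illum = random_illuminant(rng)
    print(illum, apply_cast(image, illum))
```

Because the same illuminant multiplies every pixel, a neutral gray pixel takes on exactly the illuminant's color, which is the kind of global shift that can mislead a classifier trained on well-lit images.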

Figure 2. Schematic representation of GoogLeNet. Credit: Szegedy et al. (2015).

Table 1. Results obtained on the SFU Grayball dataset, compared with state-of-the-art methods. The first two sections list statistics-based and learning-based methods, respectively.

Table 2. Results obtained on the reprocessed ColorChecker dataset, compared with state-of-the-art methods. The first two sections list statistics-based and learning-based methods, respectively.

Figure 4. Example images from the Grayball dataset before (left) and after (right) removing the illumination color cast with the algorithm presented in this paper.
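Once an illuminant has been estimated, the cast in Figure 4 can be removed by rescaling each channel. The correction step is not spelled out in these captions, so the sketch below assumes the standard diagonal correction: each channel is divided by the estimated illuminant, with gains normalized so the channel where the illuminant is strongest stays unchanged. The function name `correct_cast` is hypothetical, and the illuminant estimate is taken as given rather than computed.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]

def correct_cast(image: List[RGB], illum_est: RGB) -> List[RGB]:
    # Diagonal correction: divide each channel by the estimated illuminant.
    # Gains are normalized by the largest illuminant component, so that channel
    # keeps gain 1 and weaker channels are boosted to match it; output is clipped to [0, 1].
    peak = max(illum_est)
    gains = tuple(peak / e for e in illum_est)
    return [
        (min(1.0, r * gains[0]), min(1.0, g * gains[1]), min(1.0, b * gains[2]))
        for r, g, b in image
    ]

if __name__ == "__main__":
    # A gray pixel seen under a reddish light, corrected with that light as the estimate.
    print(correct_cast([(0.5, 0.25, 0.125)], (1.0, 0.5, 0.25)))
```

Normalizing by the peak component makes the correction independent of the illuminant's overall intensity: only its chromaticity matters, which is what illuminant estimators of this kind are generally expected to recover.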

*Figures are given separately