Figures & data

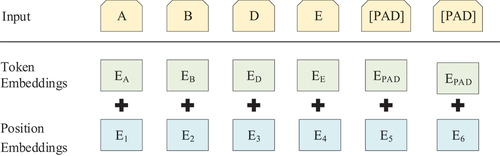

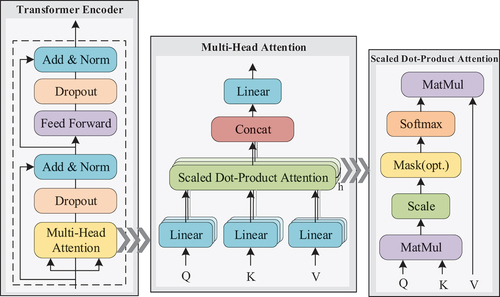

Figure 1. Model architecture of the Transformer encoder (based on Vaswani, Shazeer, and Parmar et al. 2017).

Table 1. Information about the event log.

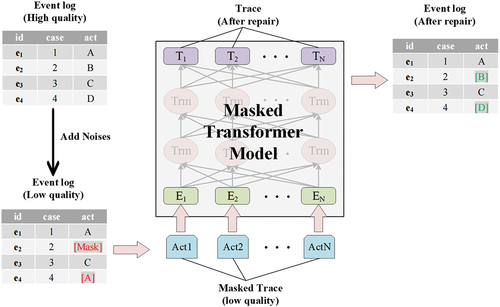

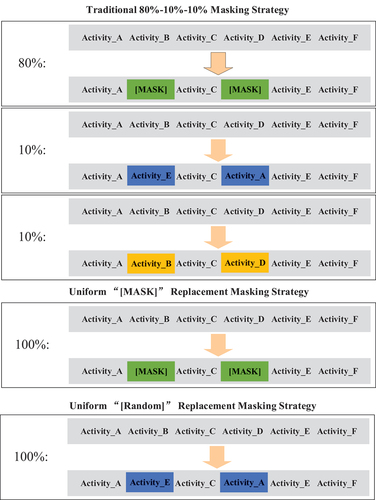

Table 2. Details of the masked Transformer-based event log repair method.

Table 3. Confusion matrix (Sokolova and Lapalme 2009).

Table 4. Evaluation metrics (Sokolova and Lapalme 2009).

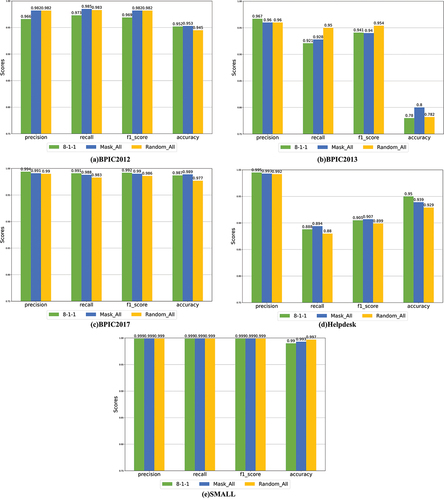

Figure 5. Performance of the model with different noise addition schemes (fixed 15% noise inclusion rate).

Table 5. Execution time in milliseconds (fixed 15% noise content).

Table 6. Details of hyperparameters for the baseline methods (Nguyen et al. 2019).

Table 7. Accuracy of missing noise repair.

Table 8. Performance of the model on the BPIC2015 dataset.