Figures & data

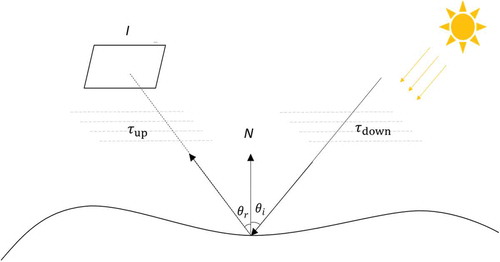

Figure 1. Concept of imaging physics, showing the image, the normal of the object, the upwelling and downwelling transmission processes, and the incident and reflection angles.

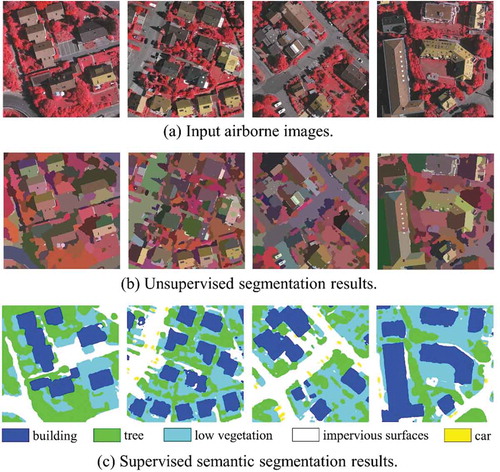

Figure 2. Examples of image segmentation of the ISPRS dataset (Gerke 2014).

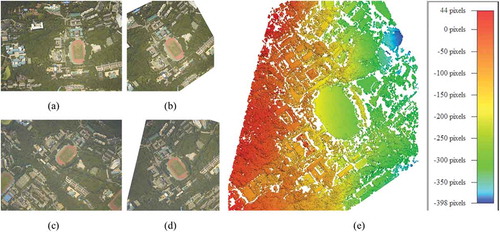

Figure 3. An example of dense matching of two images of the Wuhan University campus. (a) Left input image; (b) Left epipolar image; (c) Right input image; (d) Right epipolar image; (e) Matching result (disparity map) of the left epipolar image.

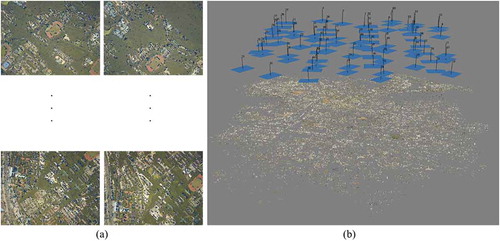

Figure 4. An example of bundle adjustment. (a) The input: original images and matched feature points; (b) The position and orientation of the camera and the 3D structure of the building, recovered successfully using Agisoft MetaShape (https://www.agisoft.com).

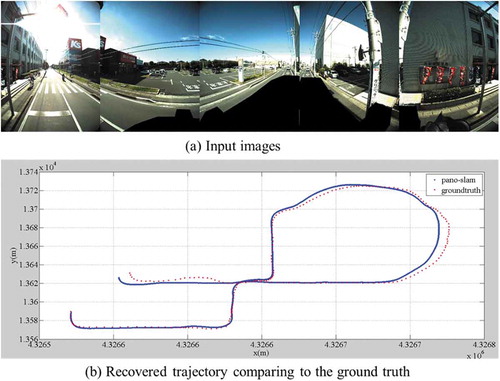

Figure 5. An example of vSLAM from a multi-camera rig (Ji et al. 2020).

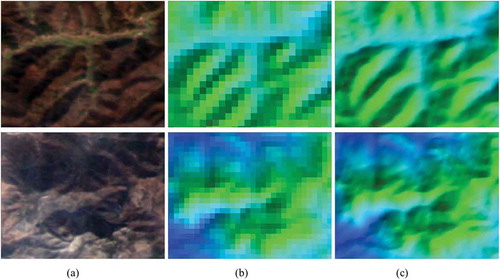

Figure 6. An example of shape from shading for surface refinement. With (a) the GaoFen-1 image as input, the original SRTM elevation model is refined from a resolution of (b) 90 m to (c) 15 m using shape from shading.

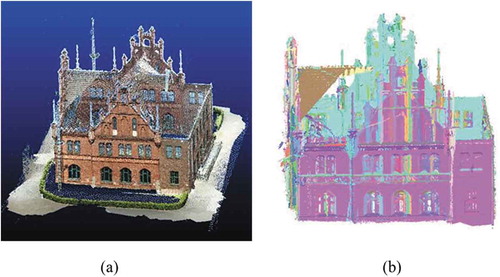

Figure 7. A segmentation example of a point cloud derived from image matching (Nex et al. 2015): (a) point cloud generated by image matching (Schönberger and Frahm 2016; Schönberger et al. 2016), (b) optimized segmentation. Building points are colored by planar segment.