Figures & data

Table 1 Description of the PLGA dataset

Table 2 Parameter settings of the regression models used in the feature selection and feature extraction experiments

Table 3 Experimental results for 10-fold cross-validation (10-CV) datasets prepared with distinct random partitions of the complete dataset, using the feature selection technique (identification of the regression model)

Table 4 Experimental results for 10-CV datasets prepared with distinct random partitions of the complete dataset using feature extraction techniques

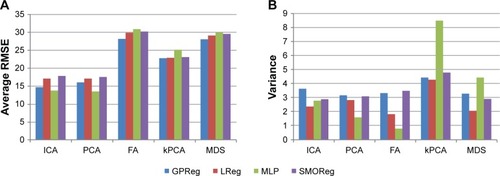

Figure 2 Results of the feature extraction experiment for the reduced dimension set of 30 features: a comparison of the regression models using (A) average RMSE and (B) variance.

Abbreviations: RMSE, root mean square error; ICA, independent component analysis; PCA, principal component analysis; FA, factor analysis; kPCA, kernel PCA; MDS, multidimensional scaling; GPReg, Gaussian process regression; LReg, linear regression; MLP, multilayer perceptron; SMOReg, sequential minimal optimization regression.
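Tables 3 and 4 and Figure 2 report RMSE values computed over 10-CV runs built from distinct random partitions of the complete dataset, together with the variance across those runs. The following is a minimal Python sketch of such an evaluation loop, offered only to illustrate how an average RMSE and its variance can be obtained. It assumes that each random partition comes from reseeding a shuffle and that "variance" means the sample variance of the per-partition mean RMSE; both are assumptions. The single-feature least-squares model (`fit_line`) and all function names are hypothetical stand-ins, not the GPReg, LReg, MLP or SMOReg models or the PLGA data used in the study.

```python
import math
import random
import statistics


def rmse(y_true, y_pred):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def kfold_indices(n, k, seed):
    """Shuffle indices 0..n-1 with the given seed and deal them into k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_line(xs, ys):
    """Hypothetical stand-in model: ordinary least squares on one feature."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    intercept = my - slope * mx
    return lambda x: intercept + slope * x


def cv_rmse(xs, ys, k, seed):
    """Mean test-fold RMSE for one random k-fold partition of the data."""
    scores = []
    for test in kfold_indices(len(xs), k, seed):
        test_set = set(test)
        train = [i for i in range(len(xs)) if i not in test_set]
        model = fit_line([xs[i] for i in train], [ys[i] for i in train])
        preds = [model(xs[i]) for i in test]
        scores.append(rmse([ys[i] for i in test], preds))
    return statistics.fmean(scores)


def repeated_cv(xs, ys, k=10, seeds=range(10)):
    """Repeat k-fold CV once per seed (assumed: one seed per random partition).

    Returns (average RMSE, sample variance of RMSE) across the partitions.
    """
    per_partition = [cv_rmse(xs, ys, k, s) for s in seeds]
    return statistics.fmean(per_partition), statistics.variance(per_partition)
```

For example, `repeated_cv(xs, ys)` on a noisy linear dataset returns an average RMSE close to the noise level and a small variance. A lower variance indicates that a model's error is less sensitive to how the data happen to be partitioned, which is the property compared in panel (B).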

Table 5 A comprehensive summary of the results obtained from each regression model, including the ensemble techniques used