?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.

?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.ABSTRACT

The truncated Euler–Maruyama method is employed together with the Multi-level Monte Carlo method to approximate expectations of some functions of solutions to stochastic differential equations (SDEs). The convergence rate and the computational cost of the approximations are proved, when the coefficients of SDEs satisfy the local Lipschitz and Khasminskii-type conditions. Numerical examples are provided to demonstrate the theoretical results.

1. Introduction

Stochastic differential equations (SDEs) have been broadly discussed and applied as a powerful tool to capture the uncertain phenomenon in the evolution of systems in many areas [Citation2,Citation6,Citation20,Citation25,Citation26]. However, the explicit solutions of SDEs can rarely be found. Therefore, the numerical approximation becomes an essential approach in the applications of SDEs. Monographs [Citation18,Citation23] provide detailed introductions and discussions to various classic methods.

Since the nonlinear coefficients have been widely adapted in SDE models [Citation1,Citation10,Citation24], explicit numerical methods that have good convergence property for SDEs with non-global Lipschitz drift and diffusion coefficients are of interest to many researchers and required by practitioners. The authors in [Citation13] developed a quite general approach to prove the strong convergence of numerical methods for nonlinear SDEs. The approach to prove the global strong convergence via the local convergence for SDEs with non-global Lipschitz coefficients was studied in [Citation29]. More recently, the taming technique was developed to handle the non-global Lipschitz coefficients [Citation15,Citation16]. Simplified proof of the tamed Euler method and the tamed Milstein method can be found in [Citation27] and [Citation30], respectively. The truncated Euler–Maruyama (EM) method was developed in [Citation21,Citation22], which is also targeting on SDEs with non-global Lipschitz coefficients. Explicit methods for nonlinear SDEs that preserve positivity can be found in, for example [Citation12,Citation19]. A modified truncated EM method that preserves the asymptotic stability and boundedness of the nonlinear SDEs was presented in [Citation11].

Compared to the explicit methods mentioned above, the methods with implicit term have better convergence property in approximating non-global Lipschitz SDEs with the trade-off of the relatively expensive computational cost. We just mention a few of the works [Citation14,Citation28,Citation31] and the references therein.

In many situations, the expected values of some functions of the solutions to SDEs are also of interest. To estimate the expected values, the classic Monte-Carlo method is a good and natural candidate. More recently, Giles in [Citation7,Citation8] developed the Multi-level Monte Carlo (MLMC) method, which improves the convergence rate and reduces the computational cost of estimating expected values. A detailed survey of recent developments and applications of the MLMC method can be found in [Citation9]. To complement [Citation9], we only mention some new developments that are not included in [Citation9]. Under the global Lipschitz and linear growth conditions, the MLMC method combined with the EM method applied to SDEs with small noise is often found to be the most efficient option [Citation3]. The MLMC method with the adaptive EM method was designed for solving SDEs driven by Lévy process [Citation4,Citation5]. The MLMC method was applied to SDEs driven by Poisson random measures by means of coupling with the split-step implicit tau-leap at levels. However, the classic EM method with the MLMC method has been proved divergence to SDEs with non-global Lipschitz coefficients [Citation17]. So it is interesting to investigate the combinations of the MLMC method with those numerical methods developed particularly for SDEs with non-global Lipschitz coefficients. In [Citation17], the tamed Euler method was combined with the MLMC method to approximate expectations of some nonlinear functions of solutions to some nonlinear SDEs.

In this paper, we embed the MLMC method with the truncated EM method and study the convergence and the computational cost of this combination to approximate expectations of some nonlinear functions of solutions to SDEs with non-global Lipschitz coefficients.

In [Citation22], the truncated EM method has been proved to converge to the true solution with the order -

for any arbitrarily small

. The plan of this paper is as follows. Firstly, we make some modifications of Theorem 3.1 in [Citation8] such that the modified theorem is able to cover the truncated EM method. Then, we use the modified theorem to prove the convergence and the computational cost of the MLMC method with the truncated EM method. At last, numerical examples for SDEs with non-global Lipschitz coefficients and expectations of nonlinear functions are given to demonstrate the theoretical results.

This paper is constructed as follows. Notations, assumptions and some existing results about the truncated EM method and the MLMC method are presented in Section 2. Section 3 contains the main result on the computational complexity. A numerical example is provided in Section 4 to illustrate theoretical results. In the appendix, we give the proof of the theorem in Section 3.

2. Mathematical preliminary

Throughout this paper, unless otherwise specified, we let be a complete probability space with a filtration

satisfying the usual condition (that is, it is right continuous and increasing while

contains all

null sets). Let

denote the expectation corresponding to

. Let

be an m-dimensional Brownian motion defined on the space. If A is a vector or matrix, its transpose is denoted by

. If

, then

is the Euclidean norm. If A is a matrix, we let

be its trace norm. If A is a symmetric matrix, denote by

and

its largest and smallest eigenvalue, respectively. Moreover, for two real numbers a and b, set

and

. If G is a set, its indicator function is denoted by

if

and 0 otherwise.

Here we consider an SDE

(1)

(1) on

with the initial value

, where

When the coefficients obey the global Lipschitz condition, the strong convergence of numerical methods for SDEs has been well studied [Citation18]. When the coefficients μ and σ are locally Lipschitz continuous without the linear growth condition, Mao [Citation21,Citation22] recently developed the truncated EM method. To make this paper self-contained, we give a brief review of this method firstly.

We first choose a strictly increasing continuous function such that

as

and

(2)

(2) Denote by

the inverse function of ω and we see that

is a strictly increasing continuous function from

to

. We also choose a number

and a strictly decreasing function

such that

(3)

(3) For a given stepsize

, let us define the truncated functions

for

, where we set

when x=0. Moreover, let

denote the approximation to

using the truncated EM method with time step size

for

. The numerical solutions

for

are formed by setting

and computing

(4)

(4) for

where

is the Brownian motion increment.

Now we give some assumptions to guarantee that the truncated EM solution (Equation4(4)

(4) ) will converge to the true solution to the SDE (Equation1

(1)

(1) ) in the strong sense.

Assumption 2.1

The coefficients μ and σ satisfy the local Lipschitz condition that for any real number R>0, there exists a such that

(5)

(5) for all

with

.

Assumption 2.2

The coefficients μ and σ satisfy the Khasminskii-type condition that there exists a pair of constants p>2 and K>0 such that

(6)

(6) for all

.

Assumption 2.3

There exists a pair of constants and

such that

(7)

(7) for all

.

Assumption 2.4

There exists a pair of positive constants ρ and such that

(8)

(8) for all

.

Let denote a payoff function of the solution to some SDE driven by a given Brownian path

. In this paper, we need f satisfies the following assumption.

Assumption 2.5

There exists a constant c>0 such that

(9)

(9) for all

.

Using the idea in [Citation7,Citation8], the expected value of can be decomposed in the following way

(10)

(10)

Let be an estimator for

using

samples. Let

be an estimator for

using

paths such that

The multi-level method independently estimates each of the expectations on the right-hand side of Equation (Equation10

(10)

(10) ) such that the computational complexity can be minimized, see [Citation8] for more details.

3. Main results

In this section, Theorem 3.1 in [Citation8] is slightly generalized. Then the convergence rate and computational complexity of the truncated EM method combined with the MLMC method are studied.

3.1. Generalized theorem for the MLMC method

Theorem 3.1

If there exist independent estimators based on

Monte Carlo samples, and positive constants

such that

the computational complexity of

, denoted by

is bounded by

then there exists a positive constant such that for any

the multi-level estimator

has a mean square error

MSE

Furthermore, the upper bound of computational complexity of Y, denoted by C, is given by

for

,

for

, and

for

.

The proof is in the appendix.

Remark 3.1

The main difference of Theorem 3.1 and Theorem 3.1 in [Citation8] lies in the first condition. In [Citation8], one needs . In this paper, this requirement is weaken by any

.

3.2. Specific theorem for truncated Euler with the MLMC

Next we consider the MLMC path simulation with truncated EM method and discuss their computational complexity using Theorem 3.1.

From Theorem 3.8 in [Citation22], under Assumptions 2.1–2.4, for every small , where

and for any real number T>0, we have

(11)

(11) for

. If

, by using the Holder inequality, we also know that

so we can obtain

(12)

(12) with the polynomial growth condition (Equation9

(9)

(9) ). This implies that

for the truncated EM scheme.

Next we consider the variance of . It follows that

(13)

(13) using Equations (Equation9

(9)

(9) ) and (Equation11

(11)

(11) ).

In addition, it can be noted that

thus we have

where the fact

from Equation (Equation3

(3)

(3) ) is used.

Now we have

So we have

for the truncated EM method.

According to the Theorem 3.1, it is easy to find that the upper bound of the computational complexity of Y is

4. Numerical simulations

To illustrate the theoretical results, we consider a nonlinear scalar SDE

(14)

(14) where

is a scalar Brownian motion. This is a specified Lewis stochastic volatility model. According to Examples 3.5 and 3.9 in [Citation22], we sample over 1000 discretized Brownian paths and use stepsizes

for

in the truncated EM method. Let

denote the sample value of

. Here we set T=1 and

.

Firstly, we show some computational results of the classic EM method with the MLMC method.

It can be seen from Table that the simulation result of (Equation14(14)

(14) ) computed by the MLMC approach together with the classic EM method is divergent.

Table 1. Numerical results using the MLMC with the classic EM method.

The simulation results using the MLMC method combined with the truncated EM method is presented in Table . It is clear that some convergent trend is displayed.

Table 2. Numerical results using the MLMC with the truncated EM method.

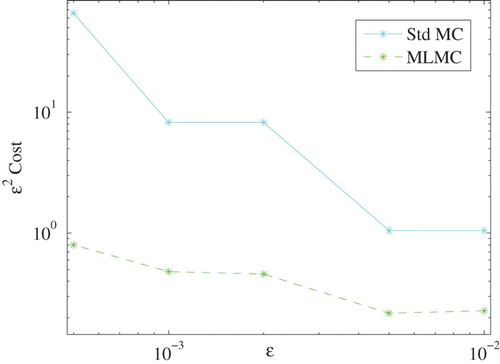

Next, it is noted that compared with the standard Monte Carlo method the computational cost can be saved by using MLMC method. From Figure , we can see that the MLMC method is approximately 10 times more efficient than the standard Monte Carlo method when is sufficient small.

Acknowledgements

The authors would like to thank the referee and editor for their very useful comments and suggestions, which have helped to improve this paper a lot.

Disclosure statement

No potential conflict of interest was reported by the authors.

ORCID

Additional information

Funding

References

- Y. Ait-Sahalia, Testing continuous-time models of the spot interest rate, Rev. Financ. Stud. 9 (1996), pp. 385–426. doi: 10.1093/rfs/9.2.385

- E. Allen, Modeling with Itô Stochastic Differential Equations, Mathematical Modelling: Theory and Applications 22, Springer, Dordrecht, 2007.

- D.F. Anderson, D.J. Higham, and Y. Sun, Multilevel Monte Carlo for stochastic differential equations with small noise, SIAM J. Numer. Anal. 54 (2016), pp. 505–529. doi: 10.1137/15M1024664

- S. Dereich and S. Li, Multilevel Monte Carlo for Lévy-driven SDEs: Central limit theorems for adaptive Euler schemes, Ann. Appl. Probab. 26 (2016), pp. 136–185. doi: 10.1214/14-AAP1087

- S. Dereich and S. Li, Multilevel Monte Carlo implementation for SDEs driven by truncated stable processes, in Monte Carlo and Quasi-Monte Carlo Methods, R. Cools and D. Nuyens, eds., Springer, 2016, pp. 3–27.

- C.W. Gardiner, Handbook of Stochastic Methods for Physics, Chemistry and the Natural Sciences, 3rd ed., Springer Series in Synergetics 13, Springer-Verlag, Berlin, 2004.

- M.B. Giles, Improved multilevel monte carlo convergence using the Milstein scheme, in Monte Carlo and Quasi-Monte Carlo Methods 2006, A. Keller, S. Heinrich, and H. Niederreiter eds., Springer, Berlin, Heidelberg, 2008, pp. 343–358.

- M.B. Giles, Multilevel monte carlo path simulation, Oper. Res. 56 (2008), pp. 607–617. doi: 10.1287/opre.1070.0496

- M.B. Giles, Multilevel Monte Carlo methods, Acta Numer. 24 (2015), pp. 259–328. doi: 10.1017/S096249291500001X

- A. Gray, D. Greenhalgh, L. Hu, X. Mao, and J. Pan, A stochastic differential equation SIS epidemic model, SIAM J. Appl. Math. 71 (2011), pp. 876–902. doi: 10.1137/10081856X

- Q. Guo, W. Liu, X. Mao, and R. Yue, The partially truncated Euler–Maruyama method and its stability and boundedness, Appl. Numer. Math. 115 (2017), pp. 235–251. doi: 10.1016/j.apnum.2017.01.010

- N. Halidias, Constructing positivity preserving numerical schemes for the two-factor CIR model, Monte Carlo Methods Appl. 21 (2015), pp. 313–323.

- D.J. Higham, X. Mao, and A.M. Stuart, Strong convergence of Euler-type methods for nonlinear stochastic differential equations, SIAM J. Numer. Anal. 40 (2002), pp. 1041–1063. doi: 10.1137/S0036142901389530

- Y. Hu, Semi-implicit Euler-Maruyama scheme for stiff stochastic equations, in Stochastic Analysis and Related Topics, V (Silivri, 1994), H. Körezlioğlu, B. Øksendal, and A.S. Üstünel, eds., Progr. Probab., Vol. 38, Birkhäuser Boston, Boston, MA, 1996, pp. 183–202.

- M. Hutzenthaler and A. Jentzen, Numerical approximations of stochastic differential equations with non-globally Lipschitz continuous coefficients, Mem. Amer. Math. Soc. 236 (2015), pp. v+99.

- M. Hutzenthaler, A. Jentzen, and P.E. Kloeden, Strong convergence of an explicit numerical method for SDEs with nonglobally Lipschitz continuous coefficients, Ann. Appl. Probab. 22 (2012), pp. 1611–1641. doi: 10.1214/11-AAP803

- M. Hutzenthaler, A. Jentzen, and P.E. Kloeden, Divergence of the multilevel Monte Carlo Euler method for nonlinear stochastic differential equations, Ann. Appl. Probab. 23 (2013), pp. 1913–1966. doi: 10.1214/12-AAP890

- P. E. Kloeden and E. Platen, Numerical Solution of Stochastic Differential Equations, Applications of Mathematics (New York) Vol. 23, Springer-Verlag, Berlin, 1992.

- W. Liu and X. Mao, Strong convergence of the stopped Euler–Maruyama method for nonlinear stochastic differential equations, Appl. Math. Comput. 223 (2013), pp. 389–400.

- X. Mao, Stochastic Differential Equations and Applications, 2nd ed., Horwood Publishing Limited, Chichester, 2008.

- X. Mao, The truncated Euler–Maruyama method for stochastic differential equations, J. Comput. Appl. Math. 290 (2015), pp. 370–384. doi: 10.1016/j.cam.2015.06.002

- X. Mao, Convergence rates of the truncated Euler–Maruyama method for stochastic differential equations, J. Comput. Appl. Math. 296 (2016), pp. 362–375. doi: 10.1016/j.cam.2015.09.035

- G.N. Milstein and M.V. Tretyakov, Stochastic Numerics for Mathematical Physics, Springer-Verlag, Berlin, 2004.

- Y. Niu, K. Burrage, and L. Chen, Modelling biochemical reaction systems by stochastic differential equations with reflection, J. Theoret. Biol. 396 (2016), pp. 90–104. doi: 10.1016/j.jtbi.2016.02.010

- B. Øksendal, Stochastic Differential Equations, 6th ed., Universitext Springer-Verlag, Berlin, 2003, an introduction with applications.

- E. Platen and D. Heath, A Benchmark Approach to Quantitative Finance, Springer Finance, Springer-Verlag, Berlin, 2006.

- S. Sabanis, A note on tamed Euler approximations, Electron. Comm. Probab. 18 (2013), pp. 47, 10. doi: 10.1214/ECP.v18-2824

- L. Szpruch, X. Mao, D.J. Higham, and J. Pan, Numerical simulation of a strongly nonlinear Ait-Sahalia-type interest rate model, BIT 51 (2011), pp. 405–425. doi: 10.1007/s10543-010-0288-y

- M.V. Tretyakov and Z. Zhang, A fundamental mean-square convergence theorem for SDEs with locally Lipschitz coefficients and its applications, SIAM J. Numer. Anal. 51 (2013), pp. 3135–3162. doi: 10.1137/120902318

- X. Wang and S. Gan, The tamed Milstein method for commutative stochastic differential equations with non-globally Lipschitz continuous coefficients, J. Difference Equ. Appl. 19 (2013), pp. 466–490. doi: 10.1080/10236198.2012.656617

- C. Yue, C. Huang, and F. Jiang, Strong convergence of split-step theta methods for non-autonomous stochastic differential equations, Int. J. Comput. Math. 91 (2014), pp. 2260–2275. doi: 10.1080/00207160.2013.871541

Appendix

Proof

Proof of Theorem 3.1

Using the notation to denote the unique integer n satisfying the inequalities

, we start by choosing L to be

so that

Hence, by the condition 1 and 2 we have

(A1)

(A1) Therefore, we have

This upper bound on the square of bias error together with the upper bound of

on the variance of the estimator, which will be proved later, gives a upper bound of

to the MSE.

Noting

using the standard result for a geometric series and the inequality

, we can obtain

Then, we have

(A2)

(A2)

We now consider the different possible values of β and to compare them to the α.

(a) If , we set

so that

which is the required.

For the bound of the computational complexity C, we have

According to the definition of L, we have

Given that

for

, we have

where

Hence, the computation complexity is bounded by

So if

, we have

If

, we have

(b) For , setting

then we have

Using the stand result for a geometric series

(A3)

(A3) we obtain that the upper bound of variance is

. So the computation complexity is bounded by

So when

, we have

When

, we have

(c) For , setting

then we have

Because

(A4)

(A4) we obtain the upper bound on the variance of the estimator to be

.

Finally, using the upper bound of , the computational complexity is

where (A4) is used in the last inequality.

Moreover, because of the inequality , we have

then

If

, then

, so we have

If

, then

, so we have