ABSTRACT

We consider the problem of computing the initial condition for a general parabolic equation from the Cauchy lateral data. The stability of this problem is well known to be logarithmic. In this paper, we introduce an approximate model, as a coupled linear system of elliptic partial differential equations. The solution to this model is the vector of Fourier coefficients of the solution to the parabolic equation above. This approximate model is solved by the quasi-reversibility method. We prove the convergence of the quasi-reversibility method as the measurement noise tends to 0. The convergence rate is Lipschitz. We present the implementation of our algorithm in detail and verify our method with numerical examples.

1. Introduction

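Let $d$ be the spatial dimension and $\Omega$ be an open and bounded domain in $\mathbb{R}^d$. Assume that $\partial\Omega$ is smooth. Let the matrix-valued function

$$A = (a_{ij})_{i,j=1}^{d} \tag{1}$$

satisfy the following conditions: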

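A is symmetric; i.e. $A^{T}(x) = A(x)$ for all $x$.

A is uniformly elliptic; i.e. there exists a positive number μ such that

$$A(x)\xi\cdot\xi \geq \mu|\xi|^2 \quad \text{for all } x \text{ and all } \xi\in\mathbb{R}^d. \tag{2}$$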

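Let $b$ be a vector-valued function and $c$ be a scalar function. Define

$$Lv = \mathrm{Div}(A\nabla v) + b\cdot\nabla v + cv \tag{3}$$

for all sufficiently smooth functions $v$. Consider the initial value problem

$$u_t(x,t) = Lu(x,t) \quad \text{for } t>0, \qquad u(x,0) = f(x), \tag{4}$$

where $f$ represents an initial source with support compactly contained in Ω. We refer the reader to the books [Citation1,Citation2]. The main aim of this paper is to solve the following problem.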

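Problem 1.1

Let T>0. Given the Cauchy boundary data

$$F(x,t) = u(x,t) \quad \text{and} \quad G(x,t) = A(x)\nabla u(x,t)\cdot\nu(x) \tag{5}$$

for $x\in\partial\Omega$, $t\in[0,T]$, determine the function $f(x)$, $x\in\Omega$. Here ν is the outward normal to $\partial\Omega$.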

Problem 1.1 is the problem of recovering the initial condition of the parabolic equation from the lateral Cauchy data. This problem has many real-world applications; e.g. determining the spatially distributed temperature inside a solid from the boundary measurement of the heat and heat flux in the time domain [Citation3]; identifying the pollution on the surface of rivers or lakes [Citation4]; effectively monitoring the heat conductive processes in steel industries, glass and polymer forming and nuclear power stations [Citation5]. Due to its realistic applications, this problem has been studied intensively. The uniqueness of Problem 1.1 is well known, see [Citation6]. It can also be deduced from the logarithmic stability results in [Citation3,Citation5]. The natural approach to solve this problem is the optimal control method; that is, to minimize a mismatch functional. The proof of the convergence of the optimal control method to the true solution to these inverse problems is challenging and is omitted. One of our contributions to the field is the convergence of the quasi-reversibility method, on which our method relies, as the measurement noise tends to 0.

Related to the inverse problem in the current paper, the problem of recovering the initial conditions for hyperbolic equations is very interesting since it arises in many real-world applications. For instance, the problems of thermo- and photo-acoustic tomography play key roles in bio-medical imaging. We refer the reader to some important works in this field [Citation7–9]. Applying the Fourier transform, one can reduce the problem of reconstructing the initial conditions for hyperbolic equations to some inverse source problems for the Helmholtz equation, see [Citation10–14] for some recent results.

In this paper, we employ the technique developed by our own research group. The main point of this technique is to derive an approximate model for the Fourier coefficients of the solution to the governing partial differential equation. This technique was first introduced in [Citation15]. This approximate model is a system of elliptic equations. It, together with Cauchy boundary data, is solved by the quasi-reversibility method. This approach was used to solve an inverse source problem for the Helmholtz equation [Citation10] and to invert the Radon transform with incomplete data [Citation16]. In particular, Klibanov et al. [Citation17] used the convexification method, a stronger version of this technique, to compute numerical solutions to the nonlinear problem of electrical impedance tomography with restricted Dirichlet-to-Neumann map data. It is worth mentioning that the numerical solutions in [Citation17] obtained by the convexification method are impressive.

As mentioned in the previous paragraph, we employ the quasi-reversibility method to solve an approximate model for the Fourier coefficients of the solution to (4). This method was first introduced by Lattès and Lions [Citation18]. It is used to compute numerical solutions to ill-posed problems for partial differential equations. Due to its strength, the quasi-reversibility method has since attracted great attention from the scientific community; see e.g. [Citation19–28]. We refer the reader to [Citation29] for a survey on this method. The solution of the approximate model in the previous paragraph obtained by the quasi-reversibility method is called the regularized solution in the theory of ill-posed problems [Citation30]. A question arises immediately about the convergence of the quasi-reversibility method: whether or not the regularized solutions obtained by the quasi-reversibility method converge to the true solution of our system of partial differential equations as the noise tends to 0. The affirmative answer to this question is obtained using a general Carleman estimate. Moreover, we employ a Carleman estimate to prove that the convergence rate is Lipschitz. It is worth mentioning that in the celebrated paper [Citation31], Bukhgeim and Klibanov discovered the use of Carleman estimates in studying inverse problems for all three main types of partial differential equations.

The paper is organized as follows. In Section 2, we describe our approach and propose an algorithm to solve Problem 1.1. In Section 3, we prove a Carleman estimate. Then, in Section 4, we study the convergence of the quasi-reversibility method as the noise tends to 0. Finally, in Section 5, we present all details about the numerical implementation and then show some numerical results from highly noisy simulated data.

2. The algorithm to solve Problem 1.1

We will employ the following basis to introduce an approximation model.

2.1. An orthonormal basis of $L^2(0,T)$ and the truncated Fourier series

For each $n \geq 1$, define a complete sequence $\{\phi_n\}_{n=1}^{\infty}$ in $L^2(0,T)$ with

$$\phi_n(t) = (t - t_0)^{n-1}\exp(t - t_0), \tag{6}$$

where $t_0 = T/2$. Using the Gram–Schmidt orthonormalization for the sequence $\{\phi_n\}_{n=1}^{\infty}$, we can construct an orthonormal basis of $L^2(0,T)$, named $\{\Psi_n\}_{n=1}^{\infty}$. For each $n$, the function $\Psi_n$ takes the form

$$\Psi_n(t) = P_{n-1}(t - t_0)\exp(t - t_0), \tag{7}$$

where $P_{n-1}$ is a polynomial of order $n-1$. For each $x\in\Omega$, we consider $u(x,t)$ as a function with respect to $t$. The Fourier series of this function is

$$u(x,t) = \sum_{n=1}^{\infty} u_n(x)\Psi_n(t), \tag{8}$$

where

$$u_n(x) = \int_0^T u(x,t)\Psi_n(t)\,dt. \tag{9}$$

Fix a positive integer $N$. We truncate the Fourier series in (8). The function $u(x,t)$ is approximated by

$$u(x,t) = \sum_{n=1}^{N} u_n(x)\Psi_n(t). \tag{10}$$

In this context, the partial derivative with respect to $t$ of $u(x,t)$ is approximated by

$$u_t(x,t) = \sum_{n=1}^{N} u_n(x)\Psi_n'(t) \tag{11}$$

for all $x\in\Omega$ and $t\in(0,T)$.

To reconstruct the function $u(x,t)$, we compute the Fourier coefficients $u_n(x)$, $n = 1,\dots,N$. It is obvious that (10) and (11) play crucial roles in this step. We therefore require that the function $\Psi_n'$ is not identically 0. The usual ‘sine and cosine’ basis of the Fourier transform does not meet this requirement, while it is not hard to verify from (7) that the basis $\{\Psi_n\}_{n=1}^{\infty}$ does. The basis $\{\Psi_n\}_{n=1}^{\infty}$ was first introduced in [Citation15]. Then, this basis was successfully used to solve several important inverse problems, including the inverse source problem for Helmholtz equations [Citation10], the inverse X-ray tomographic problem with incomplete data [Citation16] and the nonlinear inverse problem of electrical impedance tomography with restricted Dirichlet-to-Neumann map data, see [Citation17].
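The construction of the basis and the truncation step can be sketched numerically. The Python snippet below is only a minimal illustration, not the authors' code. It assumes that the generating functions have the form $(t - T/2)^{n-1}\exp(t - T/2)$, samples them on a uniform grid of $[0,T]$, and applies the modified Gram–Schmidt process with respect to a trapezoidal-rule inner product. The names `orthonormal_basis` and `truncate`, the grid size, the small value N=6 and the test function $\cos(3t)$ are hypothetical choices made for illustration.

```python
import numpy as np

def orthonormal_basis(T=4.0, N=6, num_points=2001):
    """Return (t, w, Psi); the columns of Psi are discrete orthonormal functions on [0, T]."""
    t = np.linspace(0.0, T, num_points)
    h = t[1] - t[0]
    # Trapezoidal weights: sum(w * f * g) approximates the L^2(0, T) inner product.
    w = np.full(num_points, h)
    w[0] = w[-1] = h / 2.0
    t0 = T / 2.0  # assumed shift of the generating functions
    # Column n holds (t - t0)^n * exp(t - t0), n = 0, ..., N-1 (assumed form).
    phi = np.stack([(t - t0) ** n * np.exp(t - t0) for n in range(N)], axis=1)
    Psi = np.zeros_like(phi)
    for n in range(N):
        v = phi[:, n].copy()
        # Modified Gram-Schmidt: remove the components along earlier basis functions.
        for m in range(n):
            v -= np.sum(w * Psi[:, m] * v) * Psi[:, m]
        Psi[:, n] = v / np.sqrt(np.sum(w * v * v))
    return t, w, Psi

def truncate(g, w, Psi, N):
    """Partial sum of the Fourier series of g using the first N basis functions."""
    coeffs = Psi[:, :N].T @ (w * g)  # discrete analogue of the coefficient integral
    return Psi[:, :N] @ coeffs

t, w, Psi = orthonormal_basis()
gram = Psi.T @ (w[:, None] * Psi)  # should be close to the identity matrix
g = np.cos(3.0 * t)                # arbitrary smooth test function, not from the paper
errors = [np.sqrt(np.sum(w * (g - truncate(g, w, Psi, N)) ** 2)) for N in (2, 4, 6)]
print(np.max(np.abs(gram - np.eye(6))), errors)
```

The printed errors cannot increase with N, because each partial sum is an orthogonal projection onto a larger subspace. For large N the generating functions become nearly linearly dependent, so a practical implementation may need extra care with round-off.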

2.2. An approximate model

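We introduce in this subsection a coupled system of elliptic partial differential equations without the presence of the unknown function $f$. Plugging (10) and (11) into (4), we have

$$\sum_{n=1}^{N} u_n(x)\Psi_n'(t) = \sum_{n=1}^{N} Lu_n(x)\Psi_n(t) \tag{12}$$

for all $x\in\Omega$ and $t\in(0,T)$. For each $m\in\{1,\dots,N\}$, multiplying both sides of (12) by $\Psi_m(t)$ and then integrating the obtained equation with respect to $t$, we obtain

$$\sum_{n=1}^{N} u_n(x)\int_0^T \Psi_n'(t)\Psi_m(t)\,dt = \sum_{n=1}^{N} Lu_n(x)\int_0^T \Psi_n(t)\Psi_m(t)\,dt \tag{13}$$

for all $x$ in Ω. Denote by

$$s_{mn} = \int_0^T \Psi_n'(t)\Psi_m(t)\,dt \tag{14}$$

and note that

$$\int_0^T \Psi_n(t)\Psi_m(t)\,dt = \begin{cases} 1 & \text{if } m = n,\\ 0 & \text{if } m \neq n.\end{cases} \tag{15}$$

We rewrite (13) as

$$Lu_m(x) = \sum_{n=1}^{N} s_{mn}u_n(x), \quad m = 1,\dots,N. \tag{16}$$

Denote

$$U(x) = (u_1(x),\dots,u_N(x))^{T}. \tag{17}$$

It follows from (16) that

$$LU(x) = SU(x), \quad x\in\Omega, \tag{18}$$

where $S$ is the $N\times N$ matrix whose $(m,n)$ entry is given in (14), $1\leq m,n\leq N$. Here, the operator $L$ acting on the vector $U$ is understood in the same manner as it acts on scalar valued functions, see (3).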
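The matrix S of the coupled system can be computed once the basis is available. The sketch below is an illustration under stated assumptions rather than the authors' implementation. It assumes the generating functions $(t - T/2)^{n-1}\exp(t - T/2)$, takes the $(m,n)$ entry of S to be $\int_0^T \Psi_n'(t)\Psi_m(t)\,dt$ as suggested by the derivation above, replaces the Gram–Schmidt loop with a QR factorization (which gives the same basis up to signs), and uses Gauss–Legendre quadrature. The function name `basis_and_S` and the values N=6 and 40 quadrature nodes are hypothetical.

```python
import numpy as np

def basis_and_S(T=4.0, N=6, num_nodes=40):
    """Return (S, Psi_at): the N x N matrix S and a callable evaluating the basis at times s."""
    t0 = T / 2.0  # assumed shift of the generating functions
    # Gauss-Legendre nodes and weights mapped from [-1, 1] to [0, T].
    x, wx = np.polynomial.legendre.leggauss(num_nodes)
    t = 0.5 * T * (x + 1.0)
    w = 0.5 * T * wx

    def phi(s):
        s = np.atleast_1d(np.asarray(s, dtype=float))
        return np.stack([(s - t0) ** n * np.exp(s - t0) for n in range(N)], axis=1)

    def dphi(s):
        # d/ds [(s - t0)^n e^(s - t0)] = (n (s - t0)^(n-1) + (s - t0)^n) e^(s - t0)
        s = np.atleast_1d(np.asarray(s, dtype=float))
        cols = []
        for n in range(N):
            lower = n * (s - t0) ** (n - 1) if n > 0 else np.zeros_like(s)
            cols.append((lower + (s - t0) ** n) * np.exp(s - t0))
        return np.stack(cols, axis=1)

    # sqrt(w) * phi = Q R, hence Psi = phi R^{-1} is orthonormal for the quadrature inner product.
    _, R = np.linalg.qr(np.sqrt(w)[:, None] * phi(t))
    R_inv = np.linalg.inv(R)
    Psi, dPsi = phi(t) @ R_inv, dphi(t) @ R_inv
    # S[m, n] approximates the integral of Psi_n'(t) * Psi_m(t) over (0, T).
    S = Psi.T @ (w[:, None] * dPsi)
    return S, (lambda s: phi(s) @ R_inv)

S, Psi_at = basis_and_S()
# Integration by parts: S + S^T equals the boundary term Psi(T) Psi(T)^T - Psi(0) Psi(0)^T.
boundary = Psi_at(4.0).T @ Psi_at(4.0) - Psi_at(0.0).T @ Psi_at(0.0)
print(np.max(np.abs(S + S.T - boundary)))
```

The integration-by-parts identity in the last lines is a convenient sanity check on a computed S, since it does not depend on the sign convention of the basis functions.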

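On the other hand, due to (9) and (5), the vector $U$ satisfies the boundary conditions

$$U(x) = \widetilde F(x) := \left(\int_0^T u(x,t)\Psi_n(t)\,dt\right)_{n=1}^{N}, \tag{19}$$

$$A(x)\nabla U(x)\cdot\nu(x) = \widetilde G(x) := \left(\int_0^T A(x)\nabla u(x,t)\cdot\nu(x)\,\Psi_n(t)\,dt\right)_{n=1}^{N} \tag{20}$$

for all $x\in\partial\Omega$, where $u$ and $A\nabla u\cdot\nu$ on $\partial\Omega\times[0,T]$ are the Cauchy data in (5).

Remark 2.1

From now on, we consider $\widetilde F$ and $\widetilde G$ as our ‘indirect’ boundary data. This is acceptable since these two functions can be computed directly by the algebraic formulas (19) and (20).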

Finding a vector $U$ satisfying Equation (18) and constraints (19) and (20) is the main point in our numerical method to find the function $f$. In fact, having $U = (u_1,\dots,u_N)^{T}$ in hand, we can compute the function $u(x,t)$ via (10). The desired function $f$ is given by $f(x) = u(x,0)$ for $x\in\Omega$.

Due to the truncation step in (10), Equation (18) is not exact. We call it an approximate model. Solving it, together with the ‘over-determined’ boundary conditions (19) and (20), for the Fourier coefficients $(u_n(x))_{n=1}^{N}$ of $u(x,t)$, $x\in\Omega$, $t\in[0,T]$, might not be rigorous. In fact, proving the ‘accuracy’ of (18) when $N\to\infty$ is extremely challenging and is out of the scope of this paper. However, we have experienced in many earlier works that the solution of (18), (19) and (20) approximates the Fourier coefficients of the function $u(x,t)$ well, leading to good solutions of a variety of inverse problems, see [Citation10,Citation16,Citation17,Citation32].

Remark 2.2

The choice of N

On , we arrange

grid points

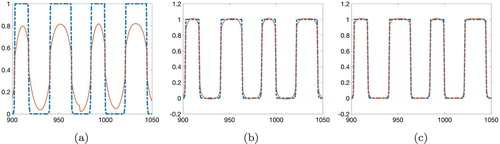

. Figure 1 displays the function

and its approximation

where

is the true solution of the forward problem and

,

, is computed using (9). This numerical experiment suggests taking N=30. It is worth mentioning that when $N$ is taken too small, the numerical solutions are not satisfactory; when N=30, the numerical results are quite accurate regardless of the high noise levels; and when $N$ is taken much larger, the computation is time-consuming.

Figure 1. The function (dash-dot) and its approximation

(solid) at the points numbered from 900 to 1050. These functions are taken from Test 4 in Section 5.2. It is evident that the larger N, the better approximation for the function u is obtained by the

partial sum of the Fourier series in (8): (a) N=10, (b) N=20, (c) N=30.

2.3. The quasi-reversibility method

As mentioned, our method to solve Problem 1.1 is based on a numerical solver for (18), (19) and (20). We do so by employing the quasi-reversibility method; that is, we minimize the functional

(21)

subject to the constraints (19) and (20). Here ε is a positive number serving as a regularization parameter. Impose the condition that the set of admissible data

(22)

(22) is nonempty, where

and

are our indirect data, see Remark 2.1, defined in (19) and (20). The result below guarantees the existence and uniqueness of the minimizer of

,

.

Proposition 2.3

Assume that the set of admissible data H, defined in (22), is nonempty. Then, for all $\epsilon > 0$, the functional in (21) admits a unique minimizer satisfying (19) and (20). This minimizer is called the regularized solution to (18), (19) and (20).

Proof.

Proposition 2.3 is an analogue of [Citation10, Theorem 3.1], whose proof is based on the Riesz representation theorem. An alternative proof follows from a standard argument in convex analysis, see e.g. [Citation28,Citation33].

The minimizer of the functional in (21) in H is called the regularized solution of (18), (19) and (20) obtained by the quasi-reversibility method.
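The mechanics of the quasi-reversibility method can be illustrated on a one-dimensional toy problem. The sketch below is not the functional used in this paper. It assumes the scalar equation $u'' = su$ on $(0,1)$ with over-determined Cauchy data at both end points, imposes the data as heavily weighted equations instead of exact constraints, and uses a plain $\ell^2$ regularization term. The function name `quasi_reversibility_1d` and the parameters `n`, `eps` and `weight` are hypothetical.

```python
import numpy as np

def quasi_reversibility_1d(s, a0, a1, b0, b1, n=100, eps=1e-8, weight=1e3):
    """Least-squares solution of u'' = s*u on (0, 1) with u(0)=a0, u'(0)=a1, u(1)=b0, u'(1)=b1."""
    h = 1.0 / n
    rows, rhs = [], []
    # Residual of the differential equation at interior nodes (central differences).
    for i in range(1, n):
        r = np.zeros(n + 1)
        r[i - 1], r[i], r[i + 1] = 1.0 / h**2, -2.0 / h**2 - s, 1.0 / h**2
        rows.append(r)
        rhs.append(0.0)
    # Cauchy data at x = 0: value and second-order one-sided derivative.
    r = np.zeros(n + 1); r[0] = weight
    rows.append(r); rhs.append(weight * a0)
    r = np.zeros(n + 1); r[:3] = weight * np.array([-3.0, 4.0, -1.0]) / (2 * h)
    rows.append(r); rhs.append(weight * a1)
    # Cauchy data at x = 1.
    r = np.zeros(n + 1); r[-1] = weight
    rows.append(r); rhs.append(weight * b0)
    r = np.zeros(n + 1); r[-3:] = weight * np.array([1.0, -4.0, 3.0]) / (2 * h)
    rows.append(r); rhs.append(weight * b1)
    # Regularization block sqrt(eps) * I, i.e. the term eps * |u|^2 in the functional.
    M = np.vstack(rows + [np.sqrt(eps) * np.eye(n + 1)])
    y = np.concatenate([np.array(rhs), np.zeros(n + 1)])
    u, *_ = np.linalg.lstsq(M, y, rcond=None)
    return np.linspace(0.0, 1.0, n + 1), u

# Data generated by the exact solution u(x) = cosh(x) of u'' = u.
x, u = quasi_reversibility_1d(1.0, 1.0, 0.0, np.cosh(1.0), np.sinh(1.0))
print(np.max(np.abs(u - np.cosh(x))))
```

With consistent data the minimizer stays close to the exact solution. The same structure (equation residual, boundary data, small regularization term) carries over to the system (18) with the constraints (19) and (20), where the unknown is vector valued.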

The analysis above leads to Algorithm 1, which describes our numerical method to reconstruct the function $f(x)$, $x\in\Omega$. In the next section, we establish a new Carleman estimate. This estimate plays an important role in proving, in Section 4, the convergence of the regularized solution obtained by the quasi-reversibility method to the true solution of (18), (19) and (20) as the measurement noise and ε tend to 0.

3. A Carleman estimate for second order elliptic operators on general domains

Let the matrix A be as in (1). The main aim of this section is to prove a Carleman estimate in a general domain Ω. Similar versions of the Carleman estimate can be found in [Citation17, Theorem 3.1] and [Citation34, Lemma 5] when Ω is an annulus and in [Citation10, Theorem 4.1] when Ω is a cube. In this paper, we will use the following estimate to derive the convergence of the quasi-reversibility method. It can be deduced from [Citation6, Lemma 3, Chapter 4, § 1].

Without loss of generality, we can assume that

(23)

(23) for some 0<X<1. Define the function

(24)

(24) Using Lemma 3 in [Citation6, Chapter 4, § 1] for the function

that is independent of the time variable, we can find a constant

and a constant

(depending only on α and the entries

,

, of the matrix A) such that for all

and

(25)

(25)

for all

where the vector U satisfies

(26)

Applying (25) and (26), we have the following lemma.

Lemma 3.1

Carleman estimate

Let satisfying

(27)

where ν is the outward unit normal vector of

Then, there exist a positive number

and

depending only on α and A, such that

(28)

(28)

for

and

. In particular, fixing

, one can find

such that

(29)

(29)

Proof.

We claim that

(30)

(30) In fact, assume that

at some points

Since

on

, see (Equation27

(27)

(27) ),

where

is any tangent vector to

at the point

. Thus,

is perpendicular to

at

. In other words,

for some nonzero scalar θ. We have

, which contradicts (2).

Integrating both sides of (25), we have

(31)

(31)

Here, the term

is dropped because it vanishes due to the divergence theorem, (27) and (30). Using the inequality

(32)

(32)

Combining (31) and (32), we obtain

Fixing

and choosing λ large such that the second term on the left-hand side dominates the first term on the right-hand side, we obtain

The estimate (28) follows.

4. The convergence of the quasi-reversibility method

In this section, we continue to assume (23). Let

and

be the noiseless data for (19) and (20), respectively; see Remark 2.1. The noisy data are denoted by

and

. Here δ is the noise level. In this section, assume that there exists

such that

for all

(33)

(33)

and the bound

(34)

(34) holds true.

The assumption about the existence of an ‘error’ function satisfying (33) and (34) is equivalent to the condition

In this section, we establish the following result to study the accuracy of the quasi-reversibility method.

Theorem 4.1

Assume that $U^*$ is the function that satisfies (18), (19) and (20) with

and

replaced by

and

respectively. Fix

Let

be the minimizer of

subject to the constraints (19) and (20) with

and

replaced by

and

respectively. Assume further that there is an ‘error’ function

in

satisfying (33) and (34). Then, we have the estimate

(35)

(35) where C is a constant that depends only on Ω,

and μ.

Proof.

Since is the minimizer of

, by the variational principle, we have

(36)

(36) for all test functions Φ in the space

Since

we can deduce from (36) that

Plugging the test function

(37)

into the identity above, we have

Applying the Cauchy–Schwarz inequality and removing lower order terms, we obtain

(38)

Recall from (3) that

Recall the function ψ in (24). Fix

and

as in Lemma 3.1. Set

We have

Using the inequality

, we have

Hence, by (29),

(39)

(39)

Now, fixing

large, we obtain from (39) that

(40)

Here, we have used the boundedness of $b$ and $c$ in Ω. Combining (37), (38) and (40) gives

This and the assumption

imply inequality (35).

Corollary 4.2

Let and

where

and

are computed from

and

via (8) and (17). Then, by the trace theory

5. Numerical illustrations

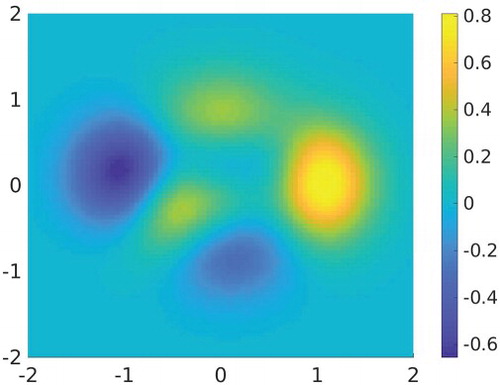

We numerically test our method when d=2. The domain Ω is the square . In this section, we write

. For the coefficients of the governing equation, we choose, for simplicity,

and

The function c is set as

which is a scaled version of the ‘peaks’ function in Matlab. The graph of c is displayed in Figure 2.

Define a grid of points in Ω

where

and

For the time variable, we choose T=4. Define a uniform partition of

as

with step size

. In our tests,

The forward problem is solved by the finite difference method with an implicit scheme. Denote by

the solution of the forward problem. The data are given by

for

where

is a uniformly distributed random function taking values in

and δ is the noise level. The noise level δ is specified in each numerical test.
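A noise model of this kind can be simulated in a few lines. The sketch below is an assumption-laden illustration: the exact formula and the range of the random function did not survive in the text above, so it assumes multiplicative noise of the form data × (1 + δ · rand) with rand uniformly distributed in [−1, 1]. The function name `add_noise` and the sample data are hypothetical.

```python
import numpy as np

def add_noise(data, delta, rng=None):
    """Return data * (1 + delta * rand), rand uniform in [-1, 1] (assumed noise model)."""
    rng = np.random.default_rng() if rng is None else rng
    data = np.asarray(data, dtype=float)
    rand = rng.uniform(-1.0, 1.0, size=data.shape)
    return data * (1.0 + delta * rand)

# Hypothetical boundary samples; delta = 0.2 corresponds to a 20% noise level.
F = np.linspace(0.5, 2.0, 50)
F_noisy = add_noise(F, 0.2, rng=np.random.default_rng(0))
print(np.max(np.abs(F_noisy - F) / np.abs(F)))
```

Under this assumed model the pointwise relative perturbation never exceeds δ, which is one common way to interpret a stated noise level.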

5.1. The implementation for Algorithm 1

The main part of this section is to compute the minimizer U of the functional in (21) subject to the constraints (19) and (20). The ‘cut-off’ number N is set to be 30, see Remark 2.2 for this choice of N. To construct the orthonormal basis

for each

, we identify the function

, defined in (6), by the

dimensional vector

. Then apply the Gram–Schmidt orthogonalization process for the set

in the

dimensional Euclidean space. In other words, we construct

in the finite difference scheme. The discretized version of

,

is

Hence,

, see (21), is approximated by

(41)

(41)

Here, we slightly change the

norm of the regularity term to the

norm. This makes the computational code lighter. The numerical results with this change are still acceptable. We also modify the regularization parameter of the term

to be

, instead of ε, since we observe that the obtained numerical results are more accurate with this modification. To numerically prove this, we solve the inverse problem when the function

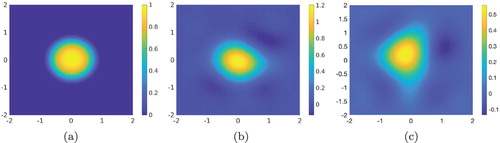

is given in Test 1 in Section 5.2 in two cases: with and without this modification and then compare the corresponding outputs. The results are displayed in Figure . It is clear from Figure that the modification above provides better numerical results.

Figure 3. Test 1. The comparison of the reconstruction of the function f with and without the modification for the regularization parameter. It is evident that the numerical result in (b) is significantly better than that in (c) in both reconstructed shape and computed value. (a) The function . (b)

computed using the regularization term

when

. (c)

computed using the regularization term

when

.

The expression in (41) is simplified as follows:

(42)

(42)

Here, we use the Kronecker delta

for the convenience of writing the computational code. We next identify

with the

dimensional vector

according to the rule

where the index

is

Then, with this notation,

in (42) is rewritten as

The

matrices

,

and

are as follows.

Define the matrix

For

, for some

, the

entry of

is

if

if

or

,

0 otherwise.

Define the matrix

. For

, for some

, the

entry of

is

if

,

if

,

0 otherwise.

Define the matrix

. For

, for some

, the

entry of

is

if

,

if

,

0 otherwise.

Remark 5.1

The values of the parameters

As mentioned, we take N=30, ,

, R=2. The regularization parameter

. These values of parameters are used for all tests in Section 5.2.

5.2. Tests

We present four numerical examples in this paper. These examples with high levels of noise show the strength of our method. We will also compare the maximum values of the reconstructed functions with the true ones. Below, $f_{\rm true}$ and $f_{\rm comp}$ are, respectively, the true source function and the reconstructed one obtained by Algorithm 1 with the parameters in Section 5.1.

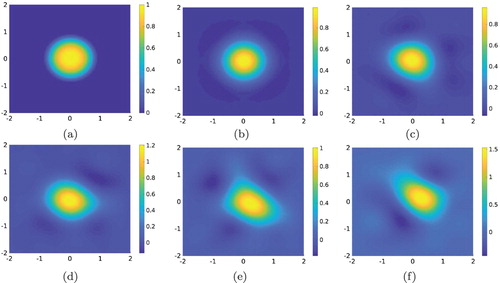

Test 1. The case of one inclusion. The function

is a smooth function supported in a disk with radius 1 centred at the origin. More precisely,

Figure 4 displays the functions

and

. Table 1 shows the reconstructed value of the function

and the relative error. The noise levels are

,

,

,

and

Table 1. Test 1. Correct and computed maximal values of source functions.

Figure 4. Test 1. The true and computed source functions. Our method still works well when

It is shown in (e) that the reconstructed value of

with

is quite accurate, even better than in (d), but in contrast, the reconstructed shape starts to break down. (a) The function

. (b)

,

. (c)

,

. (d)

,

. (e)

,

. (f)

,

.

It is evident that our method is robust for Test 1 in the sense that the reconstructed maximal value of the function f and the reconstructed shape and position of the inclusion are quite accurate.

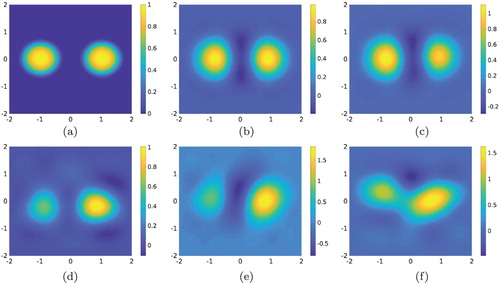

Test 2. The case of two inclusions. The function

is a smooth function supported in two disks with radius r=0.8 centred at

and

respectively. The function

is given by the formula

Figure 5 displays the functions

and

. Table 2 shows the reconstructed value of the function

and the relative error. The noise levels are

,

,

,

and

The reconstruction in Test 2 is good. In this test, the reconstruction breaks down when the noise level is

although we are able to detect the inclusions with higher noise levels.

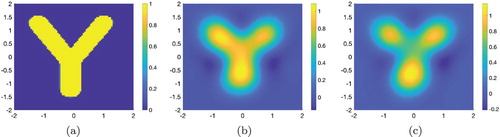

Test 3. The case of non-inclusion and nonsmooth function. The function

is the characteristic function of the letter Y. Figure 6 displays the functions

and

. The noise levels are

and

Table 2. Test 2. Correct and computed maximal values of the inclusions.

Figure 5. Test 2. The true and computed source functions. The reconstructions of the two inclusions are not symmetric, probably because the true function c, see Figure 2 for its graph, is negative on the left and positive on the right. However, both inclusions can be seen when the noise level goes up to

. (a) The function

. (b)

,

. (c)

,

. (d)

,

. (e)

,

. (f)

,

.

Figure 6. Test 3. The true and computed source functions. The letter Y can be detected well in this case. The true maximal value of

is 1. The computed maximal value of

when

is 1.09 (relative error 9%). The computed maximal value of

when

is 1.15 (relative error 15%). (a) The function

. (b)

,

. (c)

,

.

We can reconstruct the letter Y and the reconstructed maximal value of

is good when

but the error is large when the noise level reaches

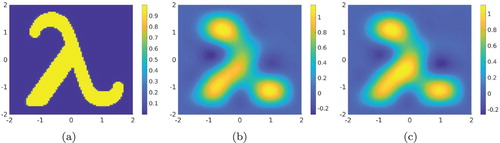

Test 4. The case of non-inclusion and nonsmooth function. The function

is the characteristic function of the letter λ. Figure 7 displays the functions

and

. The noise levels are

and

Figure 7. Test 4. The true and computed source functions. The reconstruction of λ is acceptable. The true maximal value of

is 1. The computed maximal value of

when

is 1.16 (relative error 16%). The computed maximal value of

when

is 1.11 (relative error 11%). (a) The function

. (b)

,

. (c)

,

.

The image of λ in Test 4 is acceptable. The reconstructed maximal value in Figure 7(c) is better than that in Figure 7(b), but the reconstruction of λ in Figure 7(c) is not as good as that in Figure 7(b).

6. Concluding remarks

In this paper, we have solved the problem of reconstructing the initial condition of the solution to a general class of parabolic equations from the measurement of lateral Cauchy data. The main point of the method is to derive an approximate model by a truncation of the Fourier series with respect to a special basis. We solved the approximate model by the quasi-reversibility method. The convergence of this method when the noise tends to 0 was proved. More importantly, numerical examples show that our method is robust in providing accurate reconstructions of the unknown source function from highly noisy data.

Although our method leads to good numerical results, it has a drawback. The proof of the ‘convergence’ of the system (18) as $N\to\infty$ is challenging and is omitted in this paper. We refer the reader to [Citation29, Section 4] for an alternative approach to solve Problem 1.1 by which we can avoid this lack of rigor. This method is based on the Carleman estimate for parabolic operators. However, in this case we can determine only a ‘near’ initial condition for the function

. That means, we can recover the function

where ε is any small number. An implementation of the method in [Citation29, Section 4] would be valuable. We reserve it for future research.

Acknowledgments

The authors are grateful to Michael V. Klibanov for many fruitful discussions.

Disclosure statement

No potential conflict of interest was reported by the authors.

ORCID

Loc Hoang Nguyen http://orcid.org/0000-0002-0172-8816


References

- Evans LC. Partial differential equations. Providence (RI): American Mathematical Society; 2010 (Graduate studies in mathematics; 19).

- Ladyzhenskaya OA. The boundary value problems of mathematical physics. New York (NY): Springer-Verlag; 1985.

- Klibanov MV. Estimates of initial conditions of parabolic equations and inequalities via lateral Cauchy data. Inverse Probl. 2006;22:495–514. doi: 10.1088/0266-5611/22/2/007

- El Badia A, Ha-Duong T. On an inverse source problem for the heat equation. Application to a pollution detection problem. J Inverse Ill-posed Probl. 2002;10:585–599. doi: 10.1515/jiip.2002.10.6.585

- Li J, Yamamoto M, Zou J. Conditional stability and numerical reconstruction of initial temperature. Commun Pure Appl Anal. 2009;8:361–382.

- Lavrent'ev MM, Romanov VG, Shishatskiĭ SP. Ill-posed problems of mathematical physics and analysis. Providence (RI): AMS; 1986 (Translations of mathematical monographs).

- Liu H, Uhlmann G. Determining both sound speed and internal source in thermo- and photo-acoustic tomography. Inverse Probl. 2015;31:105005.

- Katsnelson V, Nguyen LV. On the convergence of time reversal method for thermoacoustic tomography in elastic media. Appl Math Lett. 2018;77:79–86. doi: 10.1016/j.aml.2017.10.004

- Haltmeier M, Nguyen LV. Analysis of iterative methods in photoacoustic tomography with variable sound speed. SIAM J Imaging Sci. 2017;10:751–781. doi: 10.1137/16M1104822

- Nguyen LH, Li Q, Klibanov MV. A convergent numerical method for a multi-frequency inverse source problem in inhomogenous media. Inverse Probl Imaging, preprint, arXiv:1901.10047. 2019.

- Wang X, Guo Y, Zhang D, et al. Fourier method for recovering acoustic sources from multi-frequency far-field data. Inverse Probl. 2017;33:035001.

- Wang X, Guo Y, Li J, et al. Mathematical design of a novel input/instruction device using a moving acoustic emitter. Inverse Probl. 2017;33:105009.

- Li J, Liu H, Sun H. On a gesture-computing technique using eletromagnetic waves. Inverse Probl Imaging. 2018;12:677–696. doi: 10.3934/ipi.2018029

- Zhang D, Guo Y, Li J, et al. Retrieval of acoustic sources from multi-frequency phaseless data. Inverse Probl. 2018;34:094001.

- Klibanov MV. Convexification of restricted Dirichlet to Neumann map. J Inverse Ill-Posed Probl. 2017;25(5):669–685. doi: 10.1515/jiip-2017-0067

- Klibanov MV, Nguyen LH. PDE-based numerical method for a limited angle X-ray tomography. Inverse Probl. 2019;35:045009.

- Klibanov MV, Li J, Zhang W. Convexification for the inversion of a time dependent wave front in a heterogeneous medium. Inverse Probl. 2019;35:035005.

- Lattès R, Lions JL. The method of quasireversibility: applications to partial differential equations. New York (NY): Elsevier; 1969.

- Bécache E, Bourgeois L, Franceschini L, et al. Application of mixed formulations of quasi-reversibility to solve ill-posed problems for heat and wave equations: the 1D case. Inverse Probl Imaging. 2015;9(4):971–1002. doi: 10.3934/ipi.2015.9.971

- Bourgeois L. Convergence rates for the quasi-reversibility method to solve the Cauchy problem for Laplace's equation. Inverse Probl. 2006;22:413–430. doi: 10.1088/0266-5611/22/2/002

- Bourgeois L, Dardé J. A duality-based method of quasi-reversibility to solve the Cauchy problem in the presence of noisy data. Inverse Probl. 2010;26:095016. doi: 10.1088/0266-5611/26/9/095016

- Bourgeois L, Ponomarev D, Dardé J. An inverse obstacle problem for the wave equation in a finite time domain. Inverse Probl Imaging. 2019;13(2):377–400. doi: 10.3934/ipi.2019019

- Clason C, Klibanov MV. The quasi-reversibility method for thermoacoustic tomography in a heterogeneous medium. SIAM J Sci Comput. 2007;30:1–23. doi: 10.1137/06066970X

- Dardé J. Iterated quasi-reversibility method applied to elliptic and parabolic data completion problems. Inverse Probl Imaging. 2016;10:379–407. doi: 10.3934/ipi.2016005

- Kaltenbacher B, Rundell W. Regularization of a backwards parabolic equation by fractional operators. Inverse Probl Imaging. 2019;13(2):401–430. doi: 10.3934/ipi.2019020

- Klibanov MV, Santosa F. A computational quasi-reversibility method for Cauchy problems for Laplace's equation. SIAM J Appl Math. 1991;51:1653–1675. doi: 10.1137/0151085

- Klibanov MV. Carleman estimates for global uniqueness, stability and numerical methods for coefficient inverse problems. J Inverse Ill-Posed Probl. 2013;21:477–560. doi: 10.1515/jip-2012-0072

- Nguyen LH. An inverse space-dependent source problem for hyperbolic equations and the Lipschitz-like convergence of the quasi-reversibility method. Inverse Probl. 2019;35:035007.

- Klibanov MV. Carleman estimates for the regularization of ill-posed Cauchy problems. Appl Numer Math. 2015;94:46–74. doi: 10.1016/j.apnum.2015.02.003

- Tikhonov AN, Goncharsky A, Stepanov VV, et al. Numerical methods for the solution of ill-posed problems. Dordrecht: Kluwer Academic Publishers Group; 1995.

- Bukhgeim AL, Klibanov MV. Uniqueness in the large of a class of multidimensional inverse problems. Sov Math Dokl. 1981;17:244–247.

- Klibanov MV, Kolesov AE, Sullivan A, et al. A new version of the convexification method for a 1-D coefficient inverse problem with experimental data. Inverse Probl. 2018, to appear. doi:10.1088/1361-6420/aadbc6

- Klibanov MV, Nguyen LH, Sullivan A, et al. A globally convergent numerical method for a 1-D inverse medium problem with experimental data. Inverse Probl Imaging. 2016;10:1057–1085. doi: 10.3934/ipi.2016032

- Nguyen HM, Nguyen LH. Cloaking using complementary media for the Helmholtz equation and a three spheres inequality for second order elliptic equations. Trans Am Math Soc. 2015;2:93–112. doi: 10.1090/btran/7