?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.

?Mathematical formulae have been encoded as MathML and are displayed in this HTML version using MathJax in order to improve their display. Uncheck the box to turn MathJax off. This feature requires Javascript. Click on a formula to zoom.Abstract

The use of meta-heuristic methods for solving nonlinear engineering and optimization problems is one of the paramount topics that attracted the attention of the researchers. Particle swarm optimization (PSO) is an optimization algorithm which has inspired by birds flocking. However, like other methods, PSO has some disadvantages such as problems in finding the best global minimum or trapping in the local minima in some special problems. In some works, the initial particles are randomly set using uniform or Gaussian distributions. These particles sometimes fail to cover the search space completely. The main goal of this paper is to improve the mechanism of the initial population production stage in the first step to cover the feasible space properly. So, with the help of the scrambled Halton sequence and producing quasi-random numbers, the initial population has been generated in a mathematical way without making a lot of change in the original PSO algorithm and its structure. These particles cover the search space more efficiently. This new hybrid algorithm is named the Halton-PSO in this research. The results show that Halton-PSO improves the ability and efficiency of PSO. The performance and ability of the proposed Halton-PSO algorithm have been examined by 11 benchmark functions and 7 different nonlinear engineering problems. Both of the optimization results of test functions and the real problems demonstrate that the Halton-PSO method is more successful than the original PSO and the PSO family algorithms and the other methods for distinguishing the global best minimum.

PUBLIC INTEREST STATEMENT

In this work a very popular and common optimization method named PSO is modified. Optimization methods are widely used in many topics e.g. engineering, financial problems and etc. The main purpose is to find the minimum of a goal function. Particle Swarm Optimization is one the most famous algorithm to find the best solution in different cases. So, it is significant to improve the performance of it. In this paper we used quasi-random numbers (scrambled Halton sequence) to heighten the capability of PSO and modified it.

1. Introduction

In recent years, various optimization algorithms had been used to solve engineering problems and provide optimal solutions to them. In contrary to classical algorithms, the new optimization techniques are implemented to evaluate the non-differentiable and non-continues optimization problems. Most of the meta-heuristic methods have been acquired by inspecting and studying the behavior of the mammals live in nature (Eberhart et al., Citation2001; Yang, Citation2010a).

The exploration and exploitation capabilities are two major features that make the meta-heuristic algorithms powerful to find the global optimum. In fact, the exploration phase of an algorithm helps to search for the feasible space for new results and finding the global optimum. On the other hand, in the exploitation phase, for choosing the best solution, the algorithm searches the neighborhood of the highest quality solution. So, the balance of these two phases is very important, and an efficient algorithm provides a good exploration phase in the inception of the process and a good exploitation ability in the final stages (Yang, Citation2010b).

One of the well-known meta-heuristic optimization algorithms is the particle swarm optimization (PSO), and it is based on principles such as population, the intelligent particles, and motion. This algorithm implements the social behavior of animals such as fish and bird flocks (Kennedy & Eberhart, Citation1995). By using the comprehensive of swarm intelligence, this method makes up new solutions. Since this algorithm has a few adjustable parameters, its implementation is very convenient. These characteristics have attracted researchers from various fields of science to use this algorithms in their studies such as harmonic elimination problem in PWM inverter (R. N. Ray et al., Citation2009), oil industry (Assareh et al., Citation2010), artificial neural networks (Chau, Citation2007), optimization of reservoir operation (SaberChenari et al., Citation2016), social networks (Rui et al., Citation2017), power system stability (Khadanga & Satapathy, Citation2015) and etc. However, the PSO has two main problems: 1) some of the particles walk into a local minimum trap and cannot find new solutions, and 2) the best solution is converged in the very first steps of the optimization procedure. Overcoming these difficulties involves many researchers from all over the world and many types of PSO had been introduced. Some of these researches are teaching-learning-based optimization (Shi & Eberhart, Citation1998a), self-organizing hierarchical PSO with time-varying acceleration coefficients (Ratnaweera et al., Citation2004), adaptive acceleration coefficients (Zjyu & Dingxue, Citation2009), PSO with asymmetric time-varying acceleration coefficients (Bao & Mao, Citation2009), a mathematical model of diverse particles groups called autonomous groups (Mirjalili et al., Citation2014a), time-varying accelerator coefficient (Cui et al., Citation2008) and combination of PSO, convergence and divergence operators for problems (Mahmoodabadi et al., Citation2012).

On the other hand, some of the researchers used the ability of other optimization methods to amplify the exploration and exploitation phases of the PSO method. For instance, PSO and Ant Colony Algorithm (PS-ACO) (Shuang et al., Citation2011), GA and PSO hybrid (Gray, Citation2016; Premalatha et al., Citation2009), gravitational search method with PSO (Mirjalili & Mohd Hashim, Citation2010), PSO-dragonfly method (Sree Ranjini & Murugan, Citation2017), PSO and multi-crossover of GA and Bee Colony mechanism (HEPSO) (Mahmoodabadi et al., Citation2014), PSO with Quantum behavior (IQS-QPSO) (Amini et al., Citation2019) and PSO with sine cosine algorithm and Levy flight (PSOSCALF) (Nezamivand Chegini et al., Citation2018) are some of these investigations.

The original PSO in the initialization step scatters the particles randomly. It is expected that by improving the initialization, the final results of PSO would be more exact. A number of researchers have tried to use other methods to improve the searching strategy in PSO. Song et al. (Citation2007) used tent-map chaotic PSO (TCPSO) to avoid trapping to local minima and improve the searching performance of standard PSO. Dong et al. (Citation2012) proposed an evolutionary circle detection method based on opposition-based learning (OBL) that was employed in the Chaotic Hybrid Algorithm (CHA) for the population initialization step. An improved PSO algorithm combined with the piecewise linear chaotic map (PWLCPSO) is proposed by Xiang et al. (Citation2007).

There are various ordinary Monte Carlo methods that employ random numbers. Quasi-Monte Carlo is one of them produced highly uniform quasi-random numbers in the structure of Monte Carlo pseudorandom numbers. These methods are widely applied in many subjects especially in multidimensional domains (Niederreiter, Citation1992; Spanier & Maize, Citation1994). Halton sequence is one of the renowned low-discrepancy sequences and used in many Quasi-Monte Carlo applications. So, the Halton sequence could employ random numbers that have a mathematical structure and this strengthens the exploration phase and covers the search space by a logical method. Ganesha et al. (Citation2016) applied optimal Halton sequences to only for 4 benchmark functions with PSO to understand and obtained some results and data to make a comparison between Halton sequences family. They did not apply this method to any engineering problems.

In this paper, Halton-PSO introduced to improve the operation of the original PSO algorithm. This method is based on a combination of the Halton sequence and the PSO algorithm. In this algorithm, the initial particles are produced by the scrambled Halton sequence. This proposed method examined by 11 different benchmark functions and 7 different nonlinear engineering problems. The Halton-PSO has placed 1th in ranking among other benchmark functions and performs well in reaching the best solutions. The Halton-PSO has increased the capability of PSO in exploration phase and covers the search space very well. On the other hand, proposing a mathematical method instead of using a random number make the Halton-PSO stronger and more reliable. After that, the main spirit of PSO is still untouched and the simplicity of this method is preserved.

The rest of this paper is organized as follows: Section 2 provides a brief review of the PSO method. In Section 3, the Halton sequence and scrambled Halton sequence introduced. Section 4 introduces a combination of PSO with the scrambled Halton sequence. The benchmark functions, results of the proposed method and comparison of this algorithm with the other meta-heuristic algorithms are shown in Section 5. Section 6 shows the capability of Halton-PSO in solving engineering problems. Finally, Section 7 concludes the current study.

2. The particle swarm optimization

The PSO method is originated from simulating the social attribute of the population of birds. After data simulation, the researchers presented their optimization method in 1995 (Eberhart & Kennedy, Citation1995).

The population is defined as the following set:

where N is the number of the particle (candidate solution). The position vector for each particle is defined as follows:

It is assumed that objective function f(x) is available for all particles of so that each particle has a unique function value

. Also, all particles of the search space are frequently moving. These movements can be defined with the help of velocity definition in the following form:

where represents the number of repetitions. The position of the particle and its velocity are indicated as

and

, respectively.

The PSO memorizes the positions of particles as .

explains the best position that every particle has ever met.

The positions are in the form of and

that

is the number of repetitions.

The aim of the algorithm is to approximate the global optimum that has been observed by all particles during the run. Consider as the index of best position with the lowest amount of objective function in

, in a certain number of repetition

, the formula is as below:

The velocity and position updating equations in the PSO technique are as follows:

where in the above equations represents the number of repetitions,

and

are random values with the uniform distribution in the interval of [0,1], and

and

are weighting factors which

is the personal learning factor and

is the social learning factor.

It should be noted that the next version of this equation is affected by each of and

factors individually (Shi & Eberhart, Citation1998b). Inertia weight factor

is embedded in order to consider the effect of velocity

during the run of the algorithm. Therefore, it is desirable that the value of

to be decreased by time. In general, the linear reduction of factor

is calculated as follows (Sun et al., Citation2011):

where represents the number of repetitions,

and

are upper and lower limits of

factor and

is the total number of repetitions. The weight factor in the convergence of particles plays a vital role. This factor adjusts the contrast between the capability of the population in local search and global search (Parsopoulos, Citation2010).

In this paper, EquationEquations (8(8)

(8) ) and (Equation9

(9)

(9) ) show that

and

linearly have been changed (Amini et al., Citation2019):

where and

are the minimum and maximum values of the personal learning factor, and

and

are the minimum and maximum values of the social learning factor.

3. Halton sequence

The Halton number sequence is created by Van der Corput sequences. In the production of this sequence, every nonnegative integer can be written uniquely using a prime base

as follows:

where . Then for the r-ray expansion in the EquationEquation (10)

(10)

(10) , the function

defined as follows:

The EquationEquation (11)(11)

(11) is called radical inverse function base

. The obtained sequence

is known as Van der Corput sequence. Halton extended this definition and generated a set of points in s-dimensional space as follows:

where are

distinct prime numbers (Fasshauer, Citation2007).

3.1. The scrambled Halton sequence

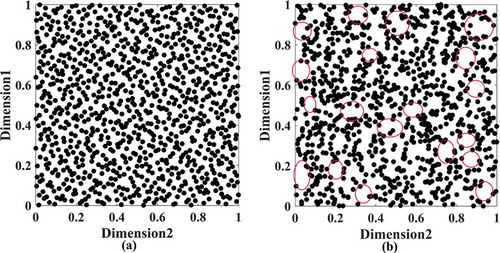

The main problem of the standard Halton sequence as discussed is when the number of dimensions increased a strong correlation occurred. It is caused by the cycle of size the prime number . So in conditions which two big prime-based sequences with large dimensions coupled, the result for this S-dimensional cube is looked as located on parallel straight lines. As an example, the 14th dimension exposes number 43 and the 15th dimension which exposes prime number 47 include 43 and 47 rising numbers, respectively. This produces a correlation between these two numbers coordinates of the sequence. This is shown in Figure . The results for Halton Sequence in large dimensions are lost their unification as shown.

Several methods have been proposed to improve the unification of the standard Halton sequence with large dimensions. The main approach is scrambled the cycles of length number to split the correlations among the coordinates of the standard Halton sequence. This is achieved by the combination of the coefficients

in the radical inverse function of EquationEquation (10)

(10)

(10) . The consequence of scrambled Halton sequence is proposed as:

where is the operator of the combination on the numbers of the expansion

(the standard Halton sequence is the unique example of the scrambled Halton sequence with no scrambling of the numbers

. Many researchers (Braaten & Weller, Citation1979; Hellekalek, Citation1984,; Kocis & Whiten, Citation1997) have proposed the variety of methods for achieving the combination of the coefficients

in EquationEquation (13)

(13)

(13) . The algorithm adopted in the current study corresponds to that of Kocis & Whiten (Citation1997). Figure exposes the unification covering of the scrambled Halton sequence relative to the standard Halton sequence.

4. Proposed method: Halton-PSO

The first and one of the most essential steps which should be considered at the beginning of the PSO is how to scatter particles in search space. It is very important that initial particles cover the search space properly and if any mistakes take place in this step, the whole method will not efficiently work. To prevent this issue, the researchers proposed the different approaches in the initial step of the optimization process for covering the search space based on the specification of a cost function (Eberhart et al., Citation2001; Kennedy & Eberhart, Citation1995). To develop PSO, most of the researchers concentrate to change and improve the main structure of PSO and its coefficient such as . Thus, the initialization of PSO has not been investigated like other sections of the algorithm.

Some of the previous studies commonly consider the initial points without any specific method and usually, randomly (Kennedy & Eberhart, Citation1995). So, it has seen there is a great potential to investigate in the step of the PSO initialization. This could be considered to implement new mathematical methods and algorithms to improve the initialization at the beginning of PSO. In this paper, the scrambled Halton sequence has been used to produce diverse solutions and increase the exploration capability of PSO in the beginning steps of the optimization process. This statistic sequence helps PSO to generate initial particles, which are more reliable than random points. For better understanding, Figure is drawn to demonstrate the effect of the Halton sequence in generating the initial population. This figure obviously illustrates the search space covering with 1000 particles in the initialization of the PSO algorithm with the use of the Halton sequence in the Halton-PSO, and a random function already utilized in previous researches. In Figure ), there are several areas that are not covered with particles and shown by circles. These areas are covered as shown in Figure ) properly. It is clear that the feasible space is searched by Halton-PSO to find the best solution very well. The simplicity of PSO encourages the scientist to apply it to a wide range of benchmark functions and engineering problems. The Halton sequence managed a part of this algorithm and do not impose any complicated statement or equation on PSO. So, the main characteristics of PSO still are untouched. On the other hand, the Halton-PSO establishes a general perspective on the effectiveness of initial points and makes it easy to compare and analyses the results of each method. According to the theory of the scrambled Halton sequence discussed in Section 3, it is revealed that this method faces no problem when the dimensions of a problem are increased.

The Halton-PSO can be described by following pseudo-code:

Generate an initial population with the scrambled Halton sequence (instead of using random numbers) for better covering of feasible space at the beginning of the PSO.

Repeat

For i = 1 to population size do

if <

then

;

= arg min (

);

For j = 1 to D do

Velocity update with EquationEquation (1)(1)

(1) ;

Position update with EquationEquation (2)(2)

(2) ;

End//end for loop j

End//end for loop i

Until the termination criterion is met

5. Results and discussion

In this section, the Halton-PSO technique is applied for solving the different benchmark functions. The results of the Halton-PSO have been compared with other algorithms.

5.1. Parameter setting of Halton-PSO

The adjustable parameters of the Halton-PSO are set according to Table .

Table 1. Parameter settings for Halton-PSO

5.2. Benchmark functions

In this section, 11 benchmark formula for each function is presented (Mirjalili & Lewis, Citation2016). In Table , for each benchmark function utilized in this study, the mathematical formula, range of decision variables and the minimum value are reported. In the first part of Table , F1 to F5 functions are related to the unimodal test functions. These functions have only one global optimum point and are excellent to test the convergence rate and the exploitation capability of an optimization algorithm. On the other hand, F6 to F11 test functions are multimodal benchmark functions. They have different local minima solutions in addition to the global optimum point. These functions are ideal for evaluating the exploration because they have multi-local minimum solutions that could test algorithms (Mirjalili & Lewis, Citation2016).

Table 2. The benchmark functions

5.3. Comparison results of Halton-PSO with the PSO Families and the other optimization algorithms

In this section, the results of the Halton-PSO algorithm are compared with other different versions of the PSO algorithm and the other methods. To achieve this purpose, the various functions introduced in Table are used. The PSO family algorithms and the other metaheuristic algorithms that their results are presented in this paper are PSO (Kennedy & Eberhart, Citation1995), HPSO-TVAC (Ratnaweera et al., Citation2004), HEPSO (Mahmoodabadi et al., Citation2014), WOA (Mirjalili & Lewis, Citation2016), DA (Mirjalili, Citation2016), SCA (Mirjalili, Citation2016), GA (Coello, Citation2000), ALO (Mirjalili, Citation2015a), MFO (Mirjalili, Citation2015b), and MVO (Mirjalili et al., Citation2016). Because of stochastic nature in meta-heuristic algorithms, their results might be suspicious for only one run. So, for comprising each algorithm, each benchmark functions presented in Table used for 30 independent runs and the results are shown in Table . The results are reported in Table by statistical characteristics such as mean and standard deviation to compare easily these algorithms with each other.

Table 3. Comparison results of Halton-PSO with PSO families and other optimization algorithms

In this paper, by comparing the results of the benchmark functions, the capability of each algorithm to evaluate the solutions in the search space has been revealed. The results of the algorithms presented in Table . The Halton-PSO is ranked 1 in F1, F2, F3, F5, F8, F9, and F10 functions. PSO ranked 1 in F4, GA in F6, HPSO-TVAC in F7 and HEPSO in F11. It is noticeable that in those benchmark functions which the Halton-PSO could not rank in the first place, it achieved to reach the 2nd position which is satisfaction. Therefore, by inspecting the results presented in Table , it is concluded that the Halton-PSO method is more efficacious than the other methods to find the best solution in the benchmark functions.

Performance ranking of the algorithms (i.e. the mean best values), as shown in Table , provides a specific opportunity to compare the algorithms. It is obvious that Halton-PSO reaches 15 in total rank and places on the top of the algorithms among other methods. The second position is taken by PSO and HPSO-TVAC algorithms with the total rank of 45. By inspecting the Table , it is clear that the total rank of other algorithms are at least triple in number and this shows the efficiency of the Halton-PSO.

Table 4. Ranking of the algorithmic performances

5.3.1. Analysis of convergence behaviour

In the first steps of the optimization procedure, the particles should explore the entire design space to produce different solutions and then smoothly converge to the near best solution (Nezamivand Chegini et al., Citation2018). This has been done to guarantee the capability of the method to search and find the global optimum. To inspect this behavior in the Halton-PSO algorithm in all optimization stages, the variations of the first dimension of the first particle are shown in Figure . These fluctuations are exposed to F1, F2, F4, F5, F6, and F10 functions. From Figure , it is clear that in the first steps of the method, there are severe variations and then these variations are decreased. In fact, this particle searches the design space for a solution in the initial stages of the optimization process and then converges around the best solution in the final steps.

Since it is not possible to judge the improvement of the position of all particles in the optimization process by examining the behavior of a particular particle, therefore, it is necessary to study the behavior of all particles during the optimization procedure (Nezamivand Chegini et al., Citation2018). To reach this goal, the average fitness of all solutions is shown in Figure . The results are evaluated for F1, F3, F9, and F10 functions.

As shown in Figure , in early steps, a good exploration behavior is occurred by particles and after that variations are gradually decreased, and finally, exploitation behavior occurs and the average fitness has a very few changes.

After investigating the behavior of all particles in the Halton-PSO, now the convergence rate of the Halton-PSO is investigated. The average fitness of best solutions during the optimization process for the benchmark functions is illustrated in Figures and . These two figures demonstrate the rate of convergence for the most 5 highest ranked algorithms according to Table .

In Figure , the results are illustrated for convergence curves of the unimodal test functions. In F1, F2, F3, and F5, as the Halton-PSO algorithm ranked first among other algorithms, it improves the solutions continuously and converges to the best solution in the last stages. The rate of convergence of other methods progresses slowly. The Halton-PSO method reaches the optimal solution for F1 to F5 at 18000, 85000, 40000, 50000, and 175000 FEs (Function Evaluations), respectively. It also shows the power of exploitation of the proposed method in the last steps. Also, in these functions, the Halton-PSO has a smooth convergence rate which is very good and shows the ability of this method in the convergence. In fact, the Halton-PSO initial particles search the feasible space in the first of the process very well and this helps this algorithm to convergence better than other methods. In the F4, as the PSO method ranked first, it reaches the best rate of convergence among other methods while the Halton-PSO track the same rate and is very close to the PSO after near 50000 FEs.

In Figure , the convergence curves of 6 multimodal functions are presented. As it shows, the convergence curves of the responses obtained by the five algorithms for F8 and F9 are very similar. In the F6 and F7, the solutions of Halton-PSO smoothly converge to the best solution. In the F8 and F9, all of the methods are converged to the solution with approximately a similar rate and the Halton-PSO is also ranked as the first one. In the last two functions, HEPSO and the Halton-PSO show the best convergence rate among the other methods. Also, the Halton-PSO acquires the best optimal results in F6 to F12 at 20000, 30000, 40000, 30000, 40000, and 45000 FEs, respectively.

From Figures and , it is obviously clear that the algorithms HEPSO, MVO, PSO, HPSO-TVAC, and the Halton-PSO could reach the global minimum as mentioned in Table . Also, it is seen that in the cases where the Halton-PSO placed the first rank among the other methods, it has also a good and satisfied convergence rate. Finally, the proposed algorithm reaches the best solution and determine it in most test functions.

6. Halton-PSO for classical engineering problems

The real engineering problems are common approaches for evaluating a meta-heuristic algorithm. Contrary to benchmark functions, most of the engineering problems solutions are unknown. Furthermore, real engineering problems have many various constraints (e.g. equality and inequality constraints). So, the proposed algorithm should be evaluated by constrained problems.

In this section, 7 real nonlinear engineering problems are shown to test the Halton-PSO technique: a gear train design, a pressure vessel design problem, a compression/tension spring, minimization of vertical deflection of an I-Beam, a speed reducer design, a truss design problem with three-bar and a welded beam design problem.

There are various penalty functions that could be used for solving these problems (Coello Coello, Citation2002). Since the main goal of this paper is not to search for a type of penalty functions, the minimalistic penalty function (the death penalty) has been used. The amount of computation of this approach compared to other penalty functions is very low and the establishment is very simple to evaluate the real constrained optimization problems (Coello Coello, Citation2002).

6.1. Gear train design problem

The gear train problem is one of the most well-known unconstrained engineering optimization problems in mechanics. The main goal of this issue is to minimize the ratio of gear as shown in Figure . The schematic of this problem has been exposed in Figure .

The nA, nB, nC and nD parameters are the tooth numbers of gears which are the design variables of this engineering optimization problem (Gandomi, Citation2014; Sandgren, Citation1990). The mathematical formula for this problem is provided in Table in Appendix.

The gear train design is clearly a discrete problem. After evaluating the problem variables, they are round to the nearest integer number and then the objective function has been calculated. Table provides the results of the Halton-PSO algorithm and the other methods in detail. The results are compared with CS (Gandomi et al., Citation2013), ALO (Mirjalili, Citation2015a), MFO (Mirjalili, Citation2015b), MVO (Mirjalili et al., Citation2016), ISA (Gandomi, Citation2014), MBA (Sadollah et al., Citation2013), GA (Coello, Citation2000), ABC (Sharma et al., Citation2012), and ALM (Kannan & Kramer, Citation1994). The term NA means that the values of the design variables are not available. From this table, it is clear that the results of optimal gear ratio obtained by the Halton-PSO algorithm are similar to the other algorithms (CS (Gandomi et al., Citation2013), ALO (Mirjalili, Citation2015a), MFO (Mirjalili, Citation2015b), MVO (Mirjalili et al., Citation2016), ISA (Gandomi, Citation2014), and MBA (Sadollah et al., Citation2013). These results show the efficiency of the Halton-PSO algorithm for solving the engineering discrete problems.

Table 5. Comparison result of the gear train design problem (NA means not available)

6.2. Pressure vessel design

Minimization of the construction cost of pressure vessel design is the main goal of this engineering problem. These costs include materials, shaping and welding, and associate with geometric parameters of the pressure vessel. So, to reach the minimum cost for design, calculation of the optimal values of the geometric parameters (as shown in Figure ) is necessary. The formulation of these problems is provided in Table in Appendix (Mirjalili & Lewis, Citation2016).

Table provides the results of Halton-PSO method and the other algorithms (GSA (Mirjalili & Lewis, Citation2016), WOA (Mirjalili & Lewis, Citation2016), MFO (Mirjalili, Citation2015b), MVO (Mirjalili et al., Citation2016), PSO (He & Wang, Citation2007), GA (Coello, Citation2000), ES (Mezura-Montes & Coello Coello, Citation2008), DE (Li et al., Citation2007), and ACO (Kaveh & Talatahari, Citation2010)) for this engineering problem. The efficiency of Halton-PSO is clearly revealed in this problem by providing the best solution among the other algorithms and is able to calculate the best design with the lowest cost.

Table 6. Comparison result for pressure vessel design problem

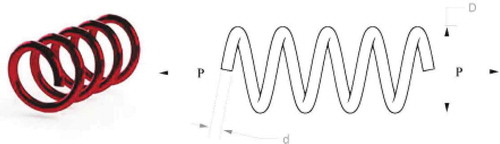

6.3. Tension/compression spring design

Tension/compression spring is the next engineering problem for evaluating by various algorithms. With four different nonlinear constraints, evaluating the best optimal design parameters to minimize the construction cost of a tension/compression spring is required. Wire diameter (d), mean diameter of spring (D), the number of active coils (N) are parameters that should be calculated. These parameters are shown in Figure . The mathematical formulation of this problem with its constraints is shown in Table in Appendix (Mirjalili & Lewis, Citation2016).

This engineering problem was optimized by various optimization algorithms such as GSA (Mirjalili & Lewis, Citation2016), WOA (Mirjalili & Lewis, Citation2016), MFO (Mirjalili, Citation2015b), GWO (Mirjalili et al., Citation2014b), PSO (He & Wang, Citation2007), GA (Coello, Citation2000), ES (Mezura-Montes & Coello Coello, Citation2008), DE (Li et al., Citation2007) and RO (Kaveh & Khayatazad, Citation2012). Table provides the best result for each method in detail. From this table, it is obvious that the Halton-PSO provides the best solution among other algorithms.

Table 7. Comparison result for pressure vessel design problem

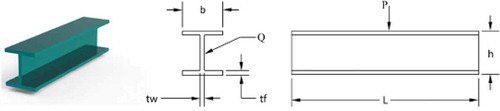

6.4. Minimization of vertical deflection of an I-Beam

Minimization of vertical deflection of an I-Beam is another engineering problem that can appear the capability of Halton-PSO in such cases. This problem has shown in Figure . Finding the optimal geometric parameters related to the cross-section of I-beam shaped is required. Length (b), height (h) and thicknesses and

are the design parameters in this problem. These parameters should be evaluated to reach the cross-section of the beam smaller or equal to 300

. The formulation of this problem is presented in Table in Appendix (Mirjalili, Citation2015b).

This engineering problem was optimized by Halton-PSO and various meta-heuristic optimization algorithms such as CS (Gandomi et al., Citation2013), MFO (Mirjalili, Citation2015b), ARSM and IARSM (Wang, Citation2003), and SOS (Cheng & Prayogo, Citation2014). Table provides the results of this problem for each algorithm. From Table , it is considered that the best results achieved by the Halton-PSO and the MFO.

Table 8. Comparison results for I-beam design problem

6.5. Speed reducer design

Speed reducer design is the next engineering problem that evaluated by Halton-PSO and the other meta-heuristic algorithms. Figure shows a schematic of the problem. The building cost of the speed reducer is the objective function in this problem. Face weight (b), a module of teeth (m), number of teeth on pinion (z), the length of shaft 1 between bearings (), the length of shaft 2 between bearings (l2) are the design parameters that are required to optimize. Nonlinear inequalities and equalities and the objective function are mathematically formulated and introduced in Table in Appendix (Mirjalili et al., Citation2016).

Table reports the results of this engineering problem by using the Halton-PSO algorithm and various optimization techniques (CS (Gandomi et al., Citation2013), TAS (Kuang et al., Citation1998), SBS (Akhtar et al., Citation2002; T Ray & Saini Citation2001; Montes et al., Citation2003). Table shows that Halton-PSO by far has obtained the best optimal cost in comparison with other meta-heuristic methods. On the other hand, results that TAS and Ray and Saini evaluated are violated the constraints and are infeasible. So, CS and SBS are ranked second and third, respectively.

Table 9. Comparison results for speed reducer design

6.6. Truss design problem with three-bar

Another engineering problem that has been solved by the Halton-PSO is the truss design problem with three-bar. Minimization of the truss-weight without exceeding the deformation, buckling and tension constraints is required. In other words, determination of optimal values for a cross-section of and

without violating the constraints is suitable. Figure shows the parameters. The mathematical formulation of this engineering problem is given in Table in Appendix.

Many researchers have implemented many optimization algorithms to evaluate this problem (Gandomi et al., Citation2013; Liu et al., Citation2010; Mirjalili, Citation2015a, Citation2015b; Sadollah et al., Citation2013; Mirjalili et al., Citation2016; Tsai, Citation2005; Zhang et al., Citation2008). Table has shown the capability of Halton-PSO and other meta-heuristic algorithms (CS (Gandomi et al., Citation2013), ALO (Mirjalili, Citation2015a), MFO (Mirjalili, Citation2015b), MVO (Mirjalili et al., Citation2016), MBA (Sadollah et al., Citation2013), DEDS (Zhang et al., Citation2008), Tsa (Tsai, Citation2005), and PSO-DE (Liu et al., Citation2010)) to evaluate the results of optimum cost of this engineering problem. Table shows that the Halton-PSO and some of the other methods such as ALO (Mirjalili, Citation2015a), MVO (Mirjalili et al., Citation2016), DEDS (Zhang et al., Citation2008), and PSO-DE (Liu et al., Citation2010) are very close. This reveals the ability of Halton-PSO to resolve non-linear constrained problems.

Table 10. Comparison results of the three-bar truss design problem

6.7. Welded beam design problem

The last engineering problem of this section is the welded beam design problem as shown in Figure . Shear stress (), bending stress (

), buckling load on the beam (

) and end deflection of the beam (

) are the constraints governing this problem. Weld thickness (h), the clamped bar length (L), the bar height (T) and the bar thickness (b) are four parameters of this problem. This nonlinear engineering optimization problem is mathematically formulated and shown in Table in Appendix (Mirjalili & Lewis, Citation2016).

Table shows the results of this engineering problem obtained by the Halton-PSO and the other optimization methods such as WOA (Mirjalili & Lewis, Citation2016), GSA (Mirjalili & Lewis, Citation2016), MFO (Mirjalili, Citation2015b), MVO (Mirjalili et al., Citation2016), GWO (Mirjalili, Mirjalili $1., Citation2014b), RO (Kaveh & Khayatazad, Citation2012), GA (Coello, Citation2000), Random (Ragsdell & Phillips, Citation1976), and CPSO (Krohling & Dos Santos Coelho, Citation2006). The best optimal cost is evaluated by the Halton-PSO and does not violate any of the constraints. MFO and GWO are ranked second and third algorithms, respectively.

Table 11. Comparison of results for the welded beam design problem

7. Conclusion

Researchers select the initial particles of PSO randomly. This random selection fails to cover the search space as well as required. So, the exploration of the PSO algorithm needs to be better. To solve this issue, the Halton-PSO is proposed in this paper. This method is the combination of the Halton sequence and the original PSO. Then, the Halton-PSO performance compared with some of the different renowned PSO family algorithms. To reach this goal, 11 benchmark functions were used. Analyzing the convergence behavior of the test functions shows that the proposed algorithm is more successful than the other optimization algorithms in most of these functions. Therefore, the exploration problem faced in PSO is improved. On the other hand, the results of the Halton-PSO algorithm showed that this method provides a high-quality solution in design engineering problems with unknown search space. In fact, the capability of this method is proved for solving real constrained engineering problems with various characteristics and conditions.

The main purpose of this paper was to strengthen the initial population production phase in PSO optimization. On the other hand, applying the Halton sequence cannot solve the problems of early convergence and trapping into the local optimum. In fact, these problems can be overcome by enhancing the exploration phase in the early stages and the exploitation phase at the end of the optimization process. The balance between the exploration and exploitation phases during the optimization process is dependent on the velocity and position equations of the PSO algorithm. Combining the Halton sequence with position update equations to prevent early convergence can be recommended as future work.

Additional information

Funding

Notes on contributors

Pouriya Amini Digehsara

This paper is a part of a greater project about signal processing, optimization methods, data mining and fault detection which is undergoing at University of Guilan. This new algorithm will be used for the future work as a section for the optimization part.

References

- Akhtar, S., Tai, K., & Ray, T. (2002). A socio-behavioural simulation model for engineering design optimization. Engineering Optimization, 34(4), 341–29. https://doi.org/10.1080/03052150212723

- Amini, P., Bagheri, A., & Moshfegh, S. (2019, April 1). Interval search with quadratic interpolation and stable deviation quantum-behaved particle swarm optimization (IQS-QPSO). International Journal of Multiphysics, 13(2), 2. https://doi.org/10.21152/1750-9548.13.2.113

- Assareh, E., Behrang, M. A., Assari, M. R., & Ghanbarzadeh, A. (2010). Application of PSO (particle swarm optimization) and GA (genetic algorithm) techniques on demand estimation of oil in Iran. Energy, 35(12), 5223 5229. https://doi.org/10.1016/j.energy.2010.07.043

- Bao, G. Q., & Mao, K. F. (2009). Particle swarm optimization algorithm with asymmetric time varying acceleration coefficients. IEEE ROBIO (pp. 2134–2139). https://doi.org/ 10.1109/ROBIO.2009.5420504

- Braaten, E., & Weller, G. (1979). An improved low-discrepancy sequence for multidimensional quasi-Monte Carlo integration. Journal of Computational Physics, 33(2), 249–258. https://doi.org/10.1016/0021-9991(79)90019-6

- Chau, K. W. (2007). Application of a PSO-based neural network in analysis of outcomes of construction claims. Automation in Construction, 16(5), 642–646. https://doi.org/10.1016/j.autcon.2006.11.008

- Cheng, M.-Y., & Prayogo, D. (2014). Symbiotic organisms search: A new metaheuristic optimization algorithm. Computers & Structures, 139, 98–112. https://doi.org/10.1016/j.compstruc.2014.03.007

- Coello, C. A. (2000). Use of a self-adaptive penalty approach for engineering optimization problems. Computers in Industry, 41(2), 113–127. https://doi.org/10.1016/S0166-3615(99)00046-9

- Coello Coello, C. A. (2002). Theoretical and numerical constraint-handling techniques used with evolutionary algorithms: A survey of the state of the art. Computer Methods in Applied Mechanics and Engineering, 191(11–12), 1245–1287. https://doi.org/10.1016/S0045-7825(01)00323-1

- Cui, Z., Zeng, J., & Yin, Y. (2008). An improved PSO with time–varying accelerator coefficient. Eighth International Conference on Intelligent Systems Design and Applications (pp. 638–643). KAOHSIUNG, TAIWAN. https://doi.org/10.1109/ISDA.2008.86.

- Dong, N., Wu, C. H., Ip, W. H., Chen, Z. Q., Chan, C. Y., & Yung, K. L. (2012, September 1). An opposition-based chaotic GA/PSO hybrid algorithm and its application in circle detection. Computers & Mathematics with Applications, 64(6), 1886–1902. https://doi.org/10.1016/j.camwa.2012.03.040

- Eberhart, R. C., & Kennedy, J. (1995). A new optimizer using particle swarm theory. Proceedings of the SixthInternational Symposium on Micro Machine and Human Science (vol. 1, pp. 39–43). New York. https://doi.org/10.1109/MHS.1995.494215.

- Eberhart, R. C., Shi, Y., & Kennedy, J. (2001). Swarm intelligence (1st ed.). Morgan Kaufmann.

- Fasshauer, G. E. (2007). Meshfree approximation methods with MATLAB. World Scientific.

- Gandomi, A. H. (2014). Interior search algorithm (ISA): A novel approach for global optimization. ISA Transactions, 53(4), 1168–1183. https://doi.org/10.1016/j.isatra.2014.03.018

- Gandomi, A. H., Yang, X. S., & Alavi, A. H. (2013). Cuckoo search algorithm: A metaheuristic approach to solve structural optimization problems. Engineering with Computers, 29(1), 17–35. https://doi.org/10.1007/s00366-011-0241-y

- Ganesha, W., Chi, H., & Cao, Y. (2016). Particle swarm optimization simulation via optimal Halton sequences. Procedia Computer Science, 80, 772–781. https://doi.org/10.1016/j.procs.2016.05.367

- Gray, H. (2016). A hybrid PSO-GA algorithm for constrained optimization problems. Applied Mathematics and Computation, 274, 292–305. https://doi.org/10.1016/j.amc.2015.11.001

- He, Q., & Wang, L. (2007). An effective co-evolutionary particle swarm optimization for constrained engineering design problems. Engineering Applications of Artificial Intelligence, 20(1), 89–99. https://doi.org/10.1016/j.engappai.2006.03.003

- Hellekalek, P. (1984). Regularities in the distribution of special sequences. Journal of Number Theory, 18(1), 41–55. https://doi.org/10.1016/0022-314X(84)90041-6

- Kannan, B., & Kramer, S. K. (1994). An augmented lagrange multiplier based method for mixed integer discrete continuous optimization and its applications to mechanical design. Journal of Mechanical Design, 116, 405–511. https://doi.org/10.1115/1.2919393

- Kaveh, A., & Khayatazad, M. M. (2012). A new meta-heuristic method: Ray optimization. Computers & Structures, 112, 283–294. https://doi.org/10.1016/j.compstruc.2012.09.003

- Kaveh, A., & Talatahari, S. (2010). An improved ant colony optimization for constrained engineering design problems. Engineering Computing International Journa Computer-Aided Engineering, 27, 155–182. https://doi.org/10.1108/02644401011008577

- Kennedy, J., & Eberhart, R. (1995). Particle swarm optimization. Proceedings of the Sixth International Symposium on Micro Machine and Human Science Nagoya (pp. 39–43). Japan.

- Khadanga, R. K., & Satapathy, J. K. (2015). Time delay approach for PSS and SSSC based coordinated controller design using hybrid PSO–GSA algorithm. International Journal of Electrical Power & Energy Systems, 71, 262–273. https://doi.org/10.1016/j.ijepes.2015.03.014

- Kocis, L., & Whiten, W. J. (1997). Computational investigations of low-discrepancy sequences. ACM Transactions on Mathematical Software. Association for Computing Machinery, 23(2), 266–294. https://doi.org/10.1145/264029.264064

- Krohling, R. A., & Dos Santos Coelho, L. (2006). Coevolutionary particle swarm optimization using Gaussian distribution for solving constrained optimization problems. IEEE Transactions System Man, and Cybernetics B, 36(6), 1407–1416. https://10.1109/TSMCB.2006.873185

- Kuang, J. K., Rao, S. S., & Chen, L. (1998). Taguchi-aided search method for design optimization of engineering systems. Engineering Optimization, 30(1), 1–23. https://doi.org/10.1080/03052159808941235

- Li, L., Huang, Z., Liu, F., & Wu, Q. (2007). A heuristic particle swarm optimizer for optimization of pin connected structures. Computers & Structures, 85(7–8), 340–349. https://doi.org/10.1016/j.compstruc.2006.11.020

- Liu, H., Cai, Z., & Wang, Y. (2010). Hybridizing particle swarm optimization with differential evolution for constrained numerical and engineering optimization. Applied Soft Computing, 10(2), 629–640. https://doi.org/10.1016/j.asoc.2009.08.031

- Mahmoodabadi, M. J., Bagheri, A., Nariman-zadeh, N., & Jamali, A. (2012). A new optimization algorithm based on a combination of particle swarm optimization, convergence and divergence operators for singleobjective and multi-objective problems. Engineering Optimization, 44(10), 1167–1186. https://doi.org/10.1080/0305215X.2011.644545

- Mahmoodabadi, M. J., Salahshoor Mottaghi, Z., & Bagheri, A. (2014). HEPSO: High exploration particle swarm optimization. Information Sciences, 273, 101–111. https://doi.org/10.1016/j.ins.2014.02.150

- Mezura-Montes, E., & Coello Coello, C. A. (2008). An empirical study about the usefulness of evolution strategies to solve constrained optimization problems. International Journal of General Systems, 37(4), 443–473. https://doi.org/10.1080/03081070701303470

- Mirjalili, A. S. (2016). Dragonfly algorithm: A new meta-heuristic optimization technique for solving singleobjective, discrete, and multi-objective problems. Neural Computing and Applications, 27(4), 1053–1073. https://doi.org/10.1007/s00521-015-1920-1

- Mirjalili, S. A. (2015a). The ant lion optimizer. Advances in Engineering Software, 83, 80–98. https://doi.org/10.1016/j.advengsoft.2015.01.010

- Mirjalili, S. A. (2015b). Moth-Flame optimization algorithm: A novel nature-inspired heuristic paradigm. Knowledge-Based Systems, 89, 228–249. https://doi.org/10.1016/j.knosys.2015.07.006

- Mirjalili, S. A. (2016). SCA: A sine cosine algorithm for solving optimization problems. Knowledge-Based Systems, 96, 120–133. https://doi.org/10.1016/j.knosys.2015.12.022

- Mirjalili, S. A., & Lewis, A. (2016). The whale optimization algorithm. Advances in Engineering Software, 95, 51–67. https://doi.org/10.1016/j.advengsoft.2016.01.008

- Mirjalili, S. A., Lewis, A., & Sadiq, A. S. (2014a). Autonomous particles groups for particle swarm optimization. Arabian Journal of Sciences Engineering, 39(6), 4683–4697. https://doi.org/10.1007/s13369-014-1156-x

- Mirjalili, S. A., Mirjalili, S. M., & Hatamlou, A. (2016). Multi -verse optimizer: A nature inspired algorithm for global optimization. Neural Computing and Applications, 27(2), 495–513. https://doi.org/10.1007/s00521-015-1870-7

- Mirjalili, S. A., Mirjalili, S. M., & Lewis, A. (2014b). Grey wolf optimizer. Advances in Engineering Software, 69, 46–61. https://doi.org/10.1016/j.advengsoft.2013.12.007

- Mirjalili, S. A., & Mohd Hashim, S. Z. (2010). A new hybrid PSOGSA algorithm for function optimization. International Conference on Computer and Information Application (vol. 377, pp. 374–377).

- Montes, E. M., Coello, C. A. C., & Ricardo, L. (2003). Engineering optimization using a simple evolutionary algorithm. 15th Intl Conf on Tools with Art Intelligence—ICTAI’2003 (pp. 149–156). CA, USA. https://doi.org/10.1109/TAI.2003.1250183

- Nezamivand Chegini, S., Bagheri, A., & Najafi, F. (2018). PSOSCALF: A new hybrid PSO based on Sine Cosine algorithm and Levy flight for solving optimization problems. Applied Soft Computing, 73, 697–726. https://doi.org/10.1016/j.asoc.2018.09.019

- Niederreiter, H. (1992). Random number generation and quasi-Monte Carlo methods. Siam.

- Parsopoulos, K. E., editor. (2010, January 31). Particle swarm optimization and intelligence: Advances and applications: Advances and applications. IGI Global. 10.4018/978-1-61520-666-7

- Premalatha, K., Natarajan, A. M., & Hybrid, P. S. O. (2009). GA for global maximization. International Journal Open Problems Computing Sciences Mathematics, 2, 597–608. www.i-csrs.org

- Ragsdell, K., & Phillips, D. (1976). Optimal design of a class of welded structures using geometric programming. Journal of Engineering for Industry, 98, 1021–1025. https://doi.org/10.1115/1.3438995

- Ratnaweera, A., Halgamuge, S. K., & Watson, H. C. (2004). Self-organizing hierarchical particle swarm optimizer with time-varying acceleration coefficients. IEEE Transactions on Evolutionary Computation, 8, 240–255. https://doi.org/10.1109/TEVC.2004.826071

- Ray, R. N., Chatterjee, D., & Goswami, S. K. (2009). An application of PSO technique for harmonic elimination in a PWM inverter. Applied Soft Computing, 4, 1315–1320. https://doi.org/10.1016/j.asoc.2009.05.002

- Ray, T., & Saini, P. (2001). Engineering design optimization using a swarm with an intelligent information sharing among individuals. Engineering Optimization, 33, 735–748. https://doi.org/10.1080/03052150108940941

- Rui, H., Zhang, C., & Han, X. (2017). Study on application of PSO with time window for user recommendation in research social networks. Proceedings of the BPM conference (pp. 116–120). Barcelona, Spain: ACM.

- SaberChenari, K., Abghari, H., & Tabari, H. (2016). Application of PSO algorithm in short-term optimization of reservoir operation. Environmental Monitoring and Assessment, 188, 667. https://doi.org/10.1007/s10661-016-5689-1

- Sadollah, A., Bahreininejad, A., Eskandar, H., & Hamdi, M. (2013). Mine blast algorithm: A new population based algorithm for solving constrained engineering optimization problems. Applied Soft Computing, 13, 2592–2612. https://doi.org/10.1016/j.asoc.2012.11.026

- Sandgren, E. (1990). Nonlinear integer and discrete programming in mechanical design optimization. Journal Mechanica Design, 112, 223–229. https://doi.org/10.1115/1.2912596

- Sharma, T. K., Pant, M., & Singh, V. (2012). Improved local search in artificial bee colony using golden section search. arXiv Preprint arXiv, 1210.6128. https://arxiv.org/abs/1210.6128v1

- Shi, Y., & Eberhart, R. C. (1998a). Parameter selection in particle swarm optimization. International Conference on Evolutionary Programming (pp. 591–600). https://doi.org/10.1007/BFb0040810

- Shi, Y., & Eberhart, R. C. (1998b). A modified particle swarm optimizer. Proceedings of the IEEE International Conference on Evolutionary Computation (pp. 69–73). Piscataway, NJ: IEEE Press. https://doi.org/10.1109/ICEC.1998.699146.

- Shuang, B., Chen, J., & Li, Z. (2011). Study on hybrid PS-ACO algorithm. Applied Intelligent, 34, 64–73. https://doi.org/10.1007/s10489-009-0179-6

- Song, Y., Chen, Z., & Yuan, Z. (2007, March). New chaotic PSO-based neural network predictive control for nonlinear process. IEEE Transactions on Neural Networks, 18(2), 595–601. https://doi.org/10.1109/TNN.2006.890809

- Spanier, J., & Maize, E. H. (1994). Quasi-random methods for estimating integrals using relatively small samples. SIAM Journal on Applied Mathematics, 36, 18–44. https://doi.org/10.1137/1036002

- Sree Ranjini, K. S., & Murugan, S. (2017). Memory based hybrid dragonfly algorithm for numerical optimization problems. Expert Systems with Applications, 83, 63–78. https://doi.org/10.1016/j.eswa.2017.04.033

- Sun, J., Fang, W., Palade, V., Wu, X., & Xu, W. (2011). Quantum-behaved particle swarm optimization with Gaussian distributed local attractor point. Applied Mathematics and Computation, 218, 3763–3775. https://doi.org/10.1016/j.amc.2011.09.021

- Tsai, J. F. (2005). Global optimization of nonlinear fractional programming problems in engineering design. Engineering Optimization, 37, 399–409. https://doi.org/10.1080/03052150500066737

- Wang, G. G. (2003). Adaptive response surface method using inherited latin hypercube design points. Journal of Mechanical Design, 125, 210–220. https://doi.org/10.1115/1.1561044

- Xiang, T., Liao, X., & Wong, K. W. (2007, July 15). An improved particle swarm optimization algorithm combined with piecewise linear chaotic map. Applied Mathematics and Computation, 190(2), 1637–1645. https://doi.org/10.1016/j.amc.2007.02.103

- Yang, X. S. (2010a). Engineering optimization: An introduction with metaheuristic applications. John Wiley &Sons.

- Yang, X. S. (2010b). Nature-inspired metaheuristic algorithms (2th ed.). Luniver Press.

- Zhang, M., Luo, W., & Wang, X. (2008). Differential evolution with dynamic stochastic selection for constrained optimization. Information Sciences, 178, 3043–3074. https://doi.org/10.1016/j.ins.2008.02.014

- Zjyu, T., & Dingxue, Z. (2009). A modified particle swarm optimization with an adaptive acceleration coefficients. Asia – Pacific Conference on Information Processing (pp. 330–332). Shenzhen, China. http://doi.ieeecomputersociety.org/10.1109/APCIP.2009.217